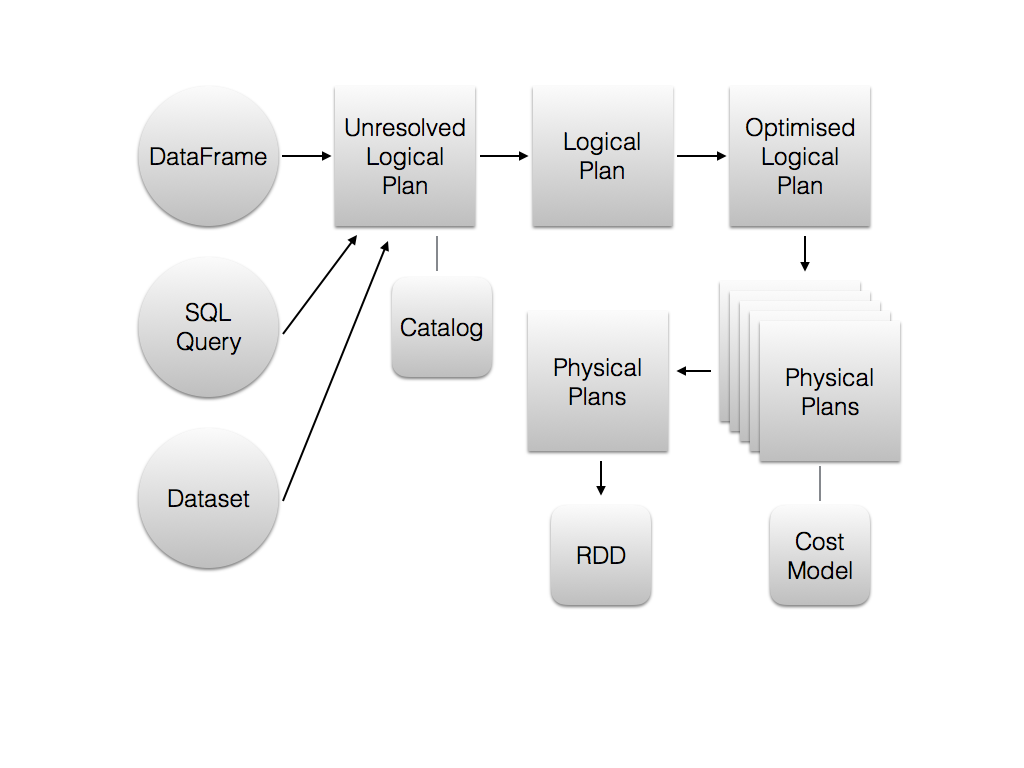

So how does the optimizer work? The following figure shows the core components and how they are involved in a sequential optimization process:

First of all, it doesn't matter whether a DataFrame, the Dataset API, or SQL is used: they all result in the same Unresolved Logical Execution Plan (ULEP). A QueryPlan is unresolved if the column names haven't been verified and the column types haven't been looked up in the catalog. Once these lookups have been done, the plan becomes a Resolved Logical Execution Plan (RLEP), which is then transformed multiple times until it results in an Optimized Logical Execution Plan. LEPs don't describe how something is computed, only what has to be computed. The optimized LEP is transformed into multiple Physical Execution Plans (PEPs) using so-called strategies. Finally, a cost model that takes statistics about the Dataset to be queried into account selects the optimal PEP for execution. Note that the final execution...
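To make the sequence of stages more concrete, here is a minimal toy sketch of such a pipeline in plain Python. It is not Spark's implementation and uses none of Spark's classes: the names `CATALOG`, `STATS`, `LogicalPlan`, `PhysicalPlan`, the single optimizer rule, and the two strategies (including the invented "IndexScan") are all assumptions made up for illustration. It only mirrors the order of the steps: resolve against a catalog, optimize with rules, generate physical candidates with strategies, and pick the cheapest one with a cost model.

```python
from dataclasses import dataclass, replace

# Hypothetical catalog: table name -> {column name: column type}
CATALOG = {"people": {"name": "string", "age": "int"}}
# Hypothetical statistics used by the toy cost model
STATS = {"people": {"rows": 1_000_000, "indexed": {"age"}}}


@dataclass(frozen=True)
class LogicalPlan:
    """Describes WHAT to compute, not how."""
    table: str
    columns: tuple           # projected column names
    filters: tuple = ()      # predicates as (column, operator, value)
    resolved: bool = False
    types: tuple = ()        # filled in during resolution


@dataclass(frozen=True)
class PhysicalPlan:
    """Describes HOW to compute a logical plan, with an estimated cost."""
    name: str
    logical: LogicalPlan
    cost: float


def resolve(plan):
    """Verify column names and look up column types in the catalog."""
    schema = CATALOG[plan.table]
    for col in list(plan.columns) + [f[0] for f in plan.filters]:
        if col not in schema:
            raise ValueError(f"cannot resolve column {col!r}")
    return replace(plan, resolved=True,
                   types=tuple(schema[c] for c in plan.columns))


def remove_duplicate_filters(plan):
    """Toy optimizer rule: drop repeated predicates, keeping order."""
    return replace(plan, filters=tuple(dict.fromkeys(plan.filters)))


RULES = [remove_duplicate_filters]


def optimize(plan):
    """Apply all rules repeatedly until the plan stops changing."""
    while True:
        new_plan = plan
        for rule in RULES:
            new_plan = rule(new_plan)
        if new_plan == plan:
            return plan
        plan = new_plan


def full_scan_strategy(plan, stats):
    """Always applicable: read every row."""
    return [PhysicalPlan("FullScan", plan, float(stats["rows"]))]


def index_scan_strategy(plan, stats):
    """Applicable only if some filter touches an indexed column."""
    if any(f[0] in stats["indexed"] for f in plan.filters):
        return [PhysicalPlan("IndexScan", plan, stats["rows"] / 100)]
    return []


STRATEGIES = [full_scan_strategy, index_scan_strategy]


def plan_query(unresolved):
    """Unresolved plan -> resolved -> optimized -> cheapest physical plan."""
    optimized = optimize(resolve(unresolved))
    candidates = [p for s in STRATEGIES
                  for p in s(optimized, STATS[optimized.table])]
    return min(candidates, key=lambda p: p.cost)


# Usage: the same predicate is given twice to exercise the optimizer rule.
query = LogicalPlan("people", ("name",),
                    (("age", ">", 30), ("age", ">", 30)))
best = plan_query(query)
print(best.name, best.logical.filters)
```

The logical plan stays purely declarative throughout; only the strategies introduce a choice of execution method, and only the cost model, fed by statistics, decides between the candidates.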