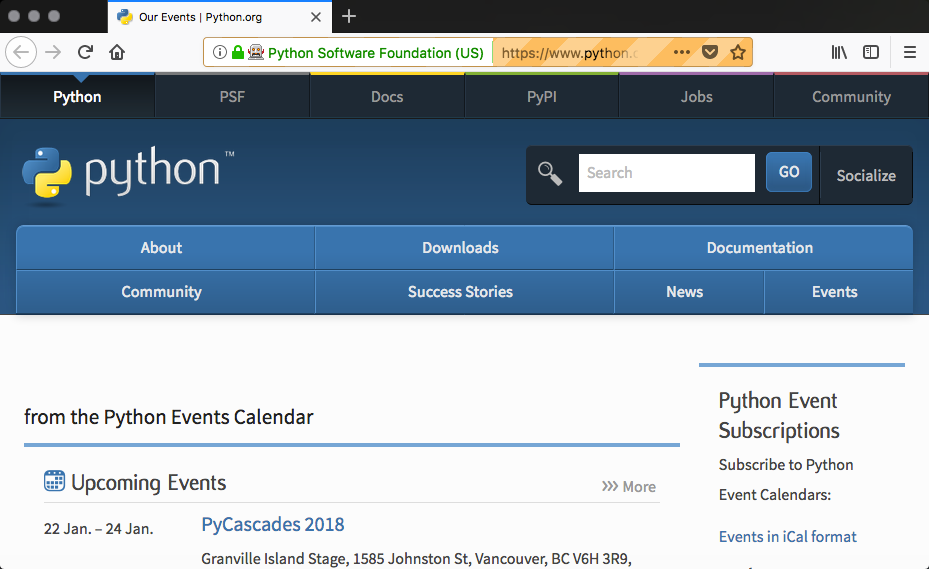

This recipe introduces Selenium and PhantomJS, two frameworks that differ considerably from those in the previous recipes. Both are more commonly used for functional/acceptance testing, but we demonstrate them here because they offer unique benefits for scraping. Among the capabilities we will look at later in the book are filling out forms, pressing buttons, and waiting for dynamic JavaScript to be downloaded and executed.

Selenium itself is a language-neutral framework. It offers bindings for a number of programming languages, including Python, Java, C#, and PHP. The framework also provides several components that focus on testing. Three commonly used components are:

- IDE for recording and replaying tests

- WebDriver, which launches a web browser (such as Firefox, Chrome, or Internet Explorer), sends commands to it, and returns the results to your code

- Grid, a server that runs tests in a web browser on a remote machine and can run multiple test cases in parallel
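To make the WebDriver and grid components more concrete, here is a minimal sketch using the Python bindings. It assumes a recent Selenium release with Firefox and its driver installed. The helper names `make_driver` and `fetch_title`, the `grid_url` parameter, and the choice of headless Firefox (instead of PhantomJS) are assumptions made for illustration, not part of this recipe.

```python
def make_driver(grid_url=None):
    """Create a WebDriver instance (hypothetical helper for this sketch).

    Selenium is imported lazily so this module can be loaded, and
    fetch_title exercised with a stand-in driver, without Selenium installed.
    """
    from selenium import webdriver  # requires: pip install selenium

    options = webdriver.FirefoxOptions()
    options.add_argument("-headless")  # run Firefox without a visible window

    if grid_url:
        # Assumed grid address supplied by the caller: the browser is
        # started on the remote grid server instead of the local machine.
        return webdriver.Remote(command_executor=grid_url, options=options)

    # Default: launch a local Firefox controlled through WebDriver.
    return webdriver.Firefox(options=options)


def fetch_title(driver, url):
    """Send a 'load this URL' command to the browser and return the page title."""
    driver.get(url)      # command sent to the browser
    return driver.title  # result returned from the browser to our code
```

A typical use would be to call `make_driver()`, pass the result to `fetch_title` along with a URL, and then call `driver.quit()` in a `finally` block so the browser process is always shut down. Passing the driver in as an argument keeps the scraping logic independent of which browser, local or remote, actually runs it.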