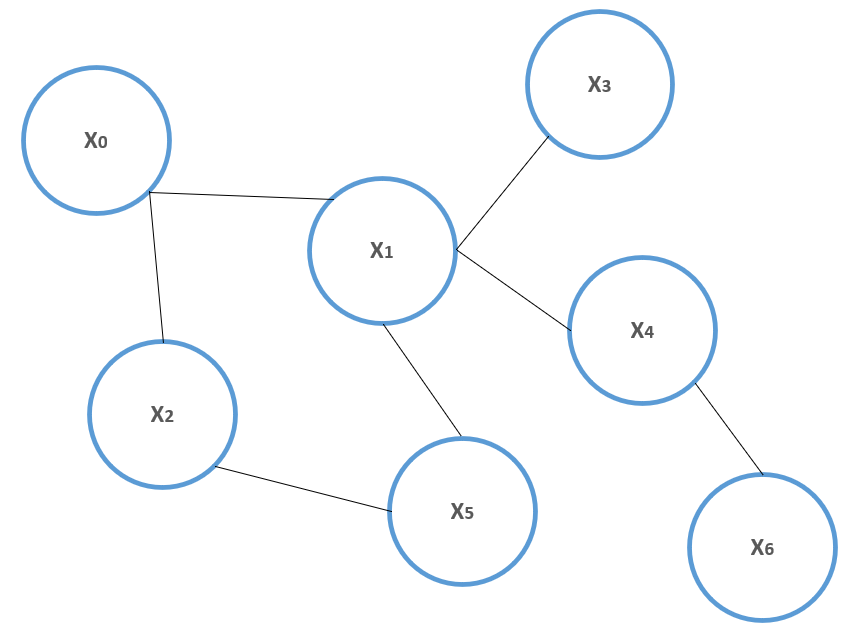

Let's consider a set of random variables, xi, organized in an undirected graph, G=(V, E), as shown in the following diagram:

Example of a probabilistic undirected graph

Two random variables, $a$ and $b$, are conditionally independent given the random variable $c$ if:

$$p(a, b \mid c) = p(a \mid c)\,p(b \mid c)$$
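As a quick numerical illustration, the following NumPy sketch builds a hypothetical joint distribution over three binary variables in which $a$ and $b$ are linked only through $c$, that is, $p(a, b, c) = p(c)\,p(a \mid c)\,p(b \mid c)$. It then checks the condition $p(a, b \mid c) = p(a \mid c)\,p(b \mid c)$ for every value of $c$. The probability tables and the helper name `is_conditionally_independent` are illustrative assumptions, and the helper assumes $p(c) > 0$ for every value of $c$.

```python
import numpy as np


def is_conditionally_independent(joint, tol=1e-9):
    """Check p(a, b | c) == p(a | c) p(b | c) for every value of c.

    `joint` is a 3-D array of probabilities indexed as joint[a, b, c].
    Assumption: p(c) > 0 for every c (otherwise the division is undefined).
    """
    joint = np.asarray(joint, dtype=float)
    p_c = joint.sum(axis=(0, 1))              # p(c), shape (C,)
    p_ab_given_c = joint / p_c                # p(a, b | c), broadcast over the last axis
    p_a_given_c = p_ab_given_c.sum(axis=1)    # p(a | c), shape (A, C)
    p_b_given_c = p_ab_given_c.sum(axis=0)    # p(b | c), shape (B, C)
    product = p_a_given_c[:, None, :] * p_b_given_c[None, :, :]
    return np.allclose(p_ab_given_c, product, atol=tol)


# Hypothetical tables: each column is a conditional distribution given c.
p_c = np.array([0.3, 0.7])
p_a_given_c = np.array([[0.9, 0.2],    # p(a=0 | c=0), p(a=0 | c=1)
                        [0.1, 0.8]])   # p(a=1 | c=0), p(a=1 | c=1)
p_b_given_c = np.array([[0.6, 0.1],
                        [0.4, 0.9]])

# joint[a, b, c] = p(c) p(a | c) p(b | c)
joint = np.einsum('c,ac,bc->abc', p_c, p_a_given_c, p_b_given_c)
print(is_conditionally_independent(joint))     # True

# Summing c out: a and b are NOT marginally independent.
p_ab = joint.sum(axis=2)
p_a, p_b = p_ab.sum(axis=1), p_ab.sum(axis=0)
print(np.allclose(p_ab, np.outer(p_a, p_b)))   # False
```

The second check shows that conditional independence does not imply marginal independence: once $c$ is summed out, $a$ and $b$ become correlated through their common dependence on $c$.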

Now, consider the graph again: if every pair of subsets of variables, $S_i$ and $S_j$, is conditionally independent given a separating subset $S_k$ (that is, every path connecting a variable in $S_i$ to a variable in $S_j$ passes through $S_k$), the graph is called a Markov random field (MRF).
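The separation requirement is a purely graph-theoretic condition, so it can be tested directly: remove the nodes of $S_k$ and check whether any node of $S_j$ is still reachable from $S_i$. The sketch below does this with a breadth-first search. The function name `separates` and the edge list are hypothetical; the edges are made up for illustration and are not the graph in the diagram.

```python
from collections import deque


def separates(edges, s_i, s_j, s_k):
    """Return True if every path from a node in s_i to a node in s_j passes through s_k."""
    # Build an undirected adjacency list from the edge list.
    adjacency = {}
    for u, v in edges:
        adjacency.setdefault(u, set()).add(v)
        adjacency.setdefault(v, set()).add(u)

    blocked = set(s_k)
    targets = set(s_j)
    # Breadth-first search from s_i that never enters the separating set s_k.
    frontier = deque(n for n in s_i if n not in blocked)
    visited = set(frontier)
    while frontier:
        node = frontier.popleft()
        if node in targets:
            return False  # reached s_j without crossing s_k
        for neighbour in adjacency.get(node, ()):
            if neighbour not in blocked and neighbour not in visited:
                visited.add(neighbour)
                frontier.append(neighbour)
    return True


# Hypothetical graph (not the one in the diagram).
edges = [("x0", "x1"), ("x1", "x2"), ("x2", "x3"), ("x1", "x4"), ("x4", "x3")]

print(separates(edges, {"x0"}, {"x3"}, {"x1"}))              # True
print(separates(edges, {"x0", "x1"}, {"x3"}, {"x2"}))        # False: x1-x4-x3 bypasses x2
print(separates(edges, {"x0", "x1"}, {"x3"}, {"x2", "x4"}))  # True
```

In an MRF, each `True` result corresponds to a conditional independence statement between the variables in $S_i$ and $S_j$ given those in $S_k$.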

Given $G = (V, E)$, a subset of vertices in which every pair is adjacent is called a clique (the set of all cliques is often denoted as $\mathrm{cl}(G)$). For example, in the graph shown previously, $(x_0, x_1)$ is a clique, and if $x_0$ and $x_5$ were connected, $(x_0, x_1, x_5)$ would also be a clique. A maximal clique is a clique that cannot be expanded by adding any other vertex. A particular family of MRFs is made up of all those graphs whose joint...