Mounting ADLS Gen2 and Azure Blob storage to Azure DBFS

Azure Databricks uses the Databricks File System (DBFS), a distributed file system that is mounted into an Azure Databricks workspace and can be made available on Azure Databricks clusters. DBFS is an abstraction built on top of Azure Blob storage and ADLS Gen2. It offers the following main benefits:

- It allows you to mount Azure Blob and ADLS Gen2 storage objects so that, once mounted, you can access files and folders without supplying storage credentials on each access.

- You can read files directly from the mount point without needing to provide a full storage Uniform Resource Locator (URL).

- You can create folders and write files directly to the mount point.

- Data written to the mount point gets persisted after a cluster is terminated.

By the end of this recipe, you will have learned how to mount Azure Blob and ADLS Gen2 storage to Azure DBFS. You will learn how to access files and folders in Blob storage and ADLS Gen2 by doing the following:

- Directly accessing the storage URL

- Mounting the storage account to DBFS
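To make the difference between the two access styles concrete, the following minimal sketch builds both path forms for the same file. The helper names are hypothetical and not part of the recipe notebooks; the account, container, and mount point names are the ones used later in this recipe, and the URL follows the usual `abfss://<container>@<account>.dfs.core.windows.net/<path>` pattern.

```python
# Hypothetical helpers (not part of the recipe notebooks) showing how the
# same file is addressed directly and through a DBFS mount point.

def direct_url(container: str, account: str, path: str) -> str:
    """Full ADLS Gen2 URL; the session must hold credentials to read it."""
    return f"abfss://{container}@{account}.dfs.core.windows.net/{path.lstrip('/')}"


def mounted_path(mount_point: str, path: str) -> str:
    """DBFS path for the same file once the container has been mounted."""
    return f"dbfs:{mount_point.rstrip('/')}/{path.lstrip('/')}"


print(direct_url("rawdata", "cookbookadlsgen2storage", "Orders.csv"))
print(mounted_path("/mnt/Gen2", "Orders.csv"))
```

The mounted form is shorter and carries no account details, which is why notebooks that read from a mount point do not need to repeat the storage URL.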

Getting ready

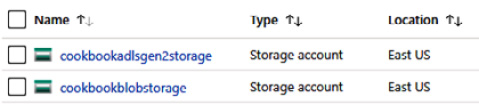

Create ADLS Gen2 and Azure Blob storage resources by following the links provided in the Technical requirements section. In this recipe, the names of the storage resources we are using will be the following:

- cookbookadlsgen2storage for ADLS Gen2 storage

- cookbookblobstorage for Azure Blob storage

You can see the Storage Accounts we created in the following screenshot:

Figure 2.1 – Storage accounts created in the CookbookRG resource group

Before you get started, you will need to create a service principal that will be used to mount the ADLS Gen2 account to DBFS. Here are the steps that need to be followed to create a service principal from the Azure portal:

- Application registration: You will need to register an Azure Active Directory (AAD) application. On the Azure portal home page, search for Azure Active Directory and select it. On the Azure Active Directory page, in the left pane, select App registrations and click on New registration:

- On the Register an application page, give any name to the application you are creating, leave the other options at their default values, and click Register:

Figure 2.3 – New application registration page (continued)

- Once an application is created, you will see it listed on the App registrations page in AAD, as seen in the following screenshot:

Figure 2.4 – New application created

- Select the new application you have created and get the application identifier (ID), and the tenant ID for the application that will be used for mounting the ADLS Gen2 account to DBFS:

Figure 2.5 – Getting application ID and tenant ID

- To create a secret, click on Certificates & secrets under the Manage heading and click on the + New client secret option listed under Client secrets. You can provide any description for the secret and set the expiry to 1 year for this exercise:

Figure 2.6 – Adding client secret for the application

- As soon as you create a secret, copy its value, because the value cannot be retrieved later. If you do not copy it immediately after it is created, you will have to create a new secret:

Figure 2.7 – Client secret value page

- You now have an application ID, a tenant ID, and a secret—these are required to mount an ADLS Gen2 account to DBFS.
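As a rough sketch of where these three values end up, the function below assembles them into the OAuth settings used by the mount code later in this recipe. The function name and the placeholder values are hypothetical; the configuration keys and the token endpoint format are taken from that mount code.

```python
# Hypothetical helper: maps the application ID, tenant ID, and secret onto
# the OAuth settings that the recipe passes to dbutils.fs.mount.

def build_oauth_configs(client_id: str, tenant_id: str, client_secret: str) -> dict:
    endpoint = f"https://login.microsoftonline.com/{tenant_id}/oauth2/token"
    return {
        "fs.azure.account.auth.type": "OAuth",
        "fs.azure.account.oauth.provider.type":
            "org.apache.hadoop.fs.azurebfs.oauth2.ClientCredsTokenProvider",
        "fs.azure.account.oauth2.client.id": client_id,
        "fs.azure.account.oauth2.client.secret": client_secret,
        "fs.azure.account.oauth2.client.endpoint": endpoint,
    }


# Placeholder values; substitute the ones copied from the portal.
configs = build_oauth_configs("<application-id>", "<tenant-id>", "<client-secret>")
for key in sorted(configs):
    # Mask the secret so it is not echoed into notebook output.
    shown = "***" if key.endswith("client.secret") else configs[key]
    print(key, "=", shown)
```

Keeping the three inputs as function arguments makes it easier to swap in values from a safer source than literals typed into a notebook cell.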

Once the application is created, we need to grant the Storage Blob Data Contributor role to ADLSGen2App on the ADLS Gen2 storage account. The following steps demonstrate how to grant this access:

- From the Azure portal home page, go to the CookbookRG resource group and select the cookbookadlsgen2storage (ADLS Gen2 storage) account you have created. Click Access Control (IAM), then click on + Add, and select the Add role assignment option. On the Add role assignment blade, assign the Storage Blob Data Contributor role to our service principal (that is, ADLSGen2App):

Figure 2.8 – Adding permissions to ADLS Gen2 for service principal

- Under Add role assignment, select a role and access for ADLSGen2App, as shown in the following screenshot, and click on the Save button:

Figure 2.9 – Adding permissions to ADLS Gen2 for service principal

We require a storage key so that we can mount the Azure Blob storage account to DBFS. The following steps show how to get a storage key for the Azure Blob storage account (cookbookblobstorage) we have already created.

- From the Azure portal home page, go to the CookbookRG resource group and select the cookbookblobstorage (Azure Blob storage) account you have created. Click on Access keys under Settings and click on the Show keys button. The value you see for the key1 key is the storage key we will use to mount the Azure Blob storage account to DBFS:

Figure 2.10 – Azure Blob storage account access key

- Copy the value of key1, which you will see when you click on Show keys. The process of getting a storage key is the same for an Azure Blob storage account and an ADLS Gen2 storage account.

- You can find the notebooks that we will be using to mount Azure Blob storage and ADLS Gen2 in the Chapter02 folder of your local cloned Git repository.

- After you import the following two notebooks, you can follow along with the code in the two notebooks for this recipe:

(a) 2-1.1.Mounting ADLS Gen-2 Storage FileSystem to DBFS.ipynb

(b) 2-1.2.Mounting Azure Blob Storage Container to DBFS.ipynb

- Create a container named rawdata in both the cookbookadlsgen2storage and cookbookblobstorage accounts you have already created, and upload the Orders.csv file, which you will find in the Chapter02 folder of your cloned Git repository.

Note

We have tested the steps mentioned in this recipe on Azure Databricks Runtime version 6.4, which includes Spark 2.4.5, and on Runtime version 7.3 LTS, which includes Spark 3.0.1.

How to do it…

The following steps show how to mount an ADLS Gen2 storage account to DBFS and view the files and folders in the rawdata folder:

- Launch a Databricks workspace, open the 2-1.1.Mounting ADLS Gen-2 Storage FileSystem to DBFS.ipynb notebook, and execute the first cell in the notebook, which contains the code shown next. Follow the steps mentioned in the Getting ready section to get the application ID, tenant ID, and secret, and replace the values of the clientID, tenantID, and clientSecret variables in the following code snippet:

    # clientID, tenantID, and clientSecret belong to the application (ADLSGen2App) we created as part of this recipe
    clientID = "XXXXXb3dd-4f6e-4XXXX-b6fa-aXXXXXXX00db"
    tenantID = "xxx-xxx-XXXc-xx-eXXXXXXXXXX"
    clientSecret = "xxxxxx-xxxxxxxxxx-XXXXXX"
    oauth2Endpoint = "https://login.microsoftonline.com/{}/oauth2/token".format(tenantID)

    # Assumed values, based on the account, container, and mount point named in this recipe
    storageAccount = "cookbookadlsgen2storage"
    mountpoint = "/mnt/Gen2"
    storageEndPoint = "abfss://rawdata@{}.dfs.core.windows.net/".format(storageAccount)

    configs = {"fs.azure.account.auth.type": "OAuth",
               "fs.azure.account.oauth.provider.type": "org.apache.hadoop.fs.azurebfs.oauth2.ClientCredsTokenProvider",
               "fs.azure.account.oauth2.client.id": clientID,
               "fs.azure.account.oauth2.client.secret": clientSecret,
               "fs.azure.account.oauth2.client.endpoint": oauth2Endpoint}

    try:
        dbutils.fs.mount(
            source = storageEndPoint,
            mount_point = mountpoint,
            extra_configs = configs)
    except Exception:
        # Any mount failure lands here; the usual cause is an existing mount
        print("Already mounted...." + mountpoint)

- After the preceding step is executed, the ADLS Gen2 storage account will be mounted to /mnt/Gen2 in DBFS. We can check the folders and files in the storage account by executing the following code:

    %fs ls /mnt/Gen2

- You can also check the files and folders using the dbutils command, as shown in the following code snippet:

    display(dbutils.fs.ls("/mnt/Gen2"))

- Upon executing the preceding command, you should see all the folders and files you have created in the storage account.

- To ensure we can read the Orders.csv file from the mounted path, we will execute the following code:

    df_ord = spark.read.format("csv").option("header", True).load("dbfs:/mnt/Gen2/Orders.csv")

- The following code will display the DataFrame's contents:

    display(df_ord)
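The mount cell in this recipe reports "Already mounted" for any failure. A possible alternative, sketched here under assumptions, is to check the existing mounts first so that genuine errors (for example, a wrong secret) still surface. The function name is hypothetical; it relies on `dbutils.fs.mounts()`, which returns entries with a `mountPoint` attribute, and takes `dbutils` as an argument so that it can be exercised outside a notebook.

```python
# Hypothetical helper: mount only when the mount point is not already in use.

def mount_if_needed(dbutils, source: str, mount_point: str, extra_configs: dict) -> bool:
    """Mount `source` at `mount_point` unless that mount point already exists.

    Returns True if a new mount was created and False if it was already there.
    Errors raised by dbutils.fs.mount are deliberately not swallowed.
    """
    existing = {m.mountPoint for m in dbutils.fs.mounts()}
    if mount_point in existing:
        return False
    dbutils.fs.mount(source=source, mount_point=mount_point, extra_configs=extra_configs)
    return True


# In a notebook, with the variables from the mount cell:
# mount_if_needed(dbutils, storageEndPoint, mountpoint, configs)
```

Because the check is explicit, rerunning the notebook is harmless, and a failed mount is reported as an error rather than being mistaken for an existing one.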

Up to now, we have learned how to mount ADLS Gen2 to DBFS. Now, the following steps show us how to mount an Azure Blob storage account to DBFS and list all files and folders created in the Blob storage account:

- Launch a Databricks workspace, open the 2-1.2.Mounting Azure Blob Storage Container to DBFS.ipynb notebook, and execute the first cell in the notebook, which contains the following code:

    # Get the storage account name and key from the portal, as shown in the Getting ready section
    storageAccount = "cookbookblobstorage"
    storageKey = "xxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxx=="
    mountpoint = "/mnt/Blob"
    storageEndpoint = "wasbs://rawdata@{}.blob.core.windows.net".format(storageAccount)
    storageConnSting = "fs.azure.account.key.{}.blob.core.windows.net".format(storageAccount)

    try:
        dbutils.fs.mount(
            source = storageEndpoint,
            mount_point = mountpoint,
            extra_configs = {storageConnSting: storageKey})
    except Exception:
        # Any mount failure lands here; the usual cause is an existing mount
        print("Already mounted...." + mountpoint)

- After the preceding step is executed, the Azure Blob storage account will be mounted to /mnt/Blob in DBFS. We can check the folders and files available in the storage account by executing the following code:

    %fs ls /mnt/Blob

- You can also check the files and folders using dbutils, as shown in the following code snippet:

    display(dbutils.fs.ls("/mnt/Blob"))

- You should see all the folders and files you have created in the storage account as the output of the preceding code.

- Run the following code to read the CSV file from the mount point:

    df_ord = spark.read.format("csv").option("header", True).load("dbfs:/mnt/Blob/Orders.csv")

- The following code will display the DataFrame's contents:

    display(df_ord.limit(10))

The preceding code will display 10 records from the DataFrame.
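The Blob mount relies on two strings derived from the account and container names: the `wasbs://` source URL and the name of the account-key setting. The sketch below, with a hypothetical function name and a placeholder key, gathers them into the keyword arguments that `dbutils.fs.mount` expects, which can help when the same pattern is repeated for several containers.

```python
# Hypothetical helper: derive the dbutils.fs.mount arguments for a Blob
# container from the account name, container name, and storage key.

def blob_mount_args(account: str, container: str, storage_key: str, mount_point: str) -> dict:
    source = f"wasbs://{container}@{account}.blob.core.windows.net"
    conf_key = f"fs.azure.account.key.{account}.blob.core.windows.net"
    return {
        "source": source,
        "mount_point": mount_point,
        "extra_configs": {conf_key: storage_key},
    }


# "<storage-key>" is a placeholder for the key1 value copied from the portal.
args = blob_mount_args("cookbookblobstorage", "rawdata", "<storage-key>", "/mnt/Blob")
print(args["source"])
print(list(args["extra_configs"]))

# In a notebook: dbutils.fs.mount(**args)
```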

How it works…

The preferred way of accessing an ADLS Gen2 storage account is by mounting the storage account file system using a service principal and Open Authorization 2.0 (OAuth 2.0). There are other ways of accessing a storage account from a Databricks notebook. These are listed here:

- Using a service principal directly without mounting the file system

- Using a storage key to access the Gen2 storage account directly without mounting

- Using a shared access signature (SAS) token

We will learn about the preceding options in the next recipes. For Azure Blob storage, you have learned how to mount a storage account by using a storage key, but there are other options as well to access an Azure Blob storage account from a Databricks notebook. These are listed here:

- Using the Spark DataFrame application programming interface (API). We will learn about this option in the next recipe.

- Using a Resilient Distributed Dataset (RDD) API. We will not talk about this option as all our examples are using DataFrames, which is the preferred method for loading data in Databricks.

To view files in the mount points, Databricks has provided utilities to interact with the file system, called dbutils. You can perform file system operations such as listing files/folders, copying files, creating directories, and so on. You can find an entire list of operations you can perform by running the following command:

dbutils.fs.help()

The preceding command will list all the operations that can be performed on a file system in Databricks.
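As one illustration of combining these operations, the following sketch walks a mount point recursively and collects files with a given extension. The function name is hypothetical; it assumes that each entry returned by `dbutils.fs.ls` exposes `path`, `name`, and an `isDir()` method, and it takes `dbutils` as an argument so that the logic can be tried outside a notebook.

```python
# Hypothetical helper built on dbutils.fs.ls: recursively find files by suffix.

def find_files(dbutils, root: str, suffix: str = ".csv") -> list:
    """Return the paths of all files under `root` whose names end with `suffix`."""
    found = []
    for info in dbutils.fs.ls(root):
        if info.isDir():
            # Descend into sub-folders using the path reported by the listing.
            found.extend(find_files(dbutils, info.path, suffix))
        elif info.name.endswith(suffix):
            found.append(info.path)
    return found


# In a notebook: find_files(dbutils, "/mnt/Gen2")
```

On large folder trees this makes one listing call per folder, so it is best suited to small exploratory checks.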

There's more…

You can also authenticate to ADLS Gen-2 storage accounts using a storage account access key, but this is less secure and is best limited to non-production environments. You can get the storage account access key using the same method you learnt for the Azure Blob storage account in the Getting ready section of this recipe. Run the following steps to authenticate to ADLS Gen-2 using an access key and read the Orders.csv data.

Run the following to set the storage account and access key details in variables.

    # ADLS Gen-2 account name and access key details
    storageaccount = "demostoragegen2"
    acct_info = f"fs.azure.account.key.{storageaccount}.dfs.core.windows.net"
    accesskey = "xxx-xxx-xxx-xxx"
    print(acct_info)

To authenticate using the access key, we need to set the notebook session configs by running the following code.

    # Set the account credentials in the notebook session configs
    spark.conf.set(acct_info, accesskey)

Run the following code to verify that we can authenticate using the access key and list the Orders.csv file information.

    dbutils.fs.ls(f"abfss://rawdata@{storageaccount}.dfs.core.windows.net/Orders.csv")

Let's read the Orders.csv file by running the following code.

    ordersDF = spark.read.format("csv").option("header", True).load(f"abfss://rawdata@{storageaccount}.dfs.core.windows.net/Orders.csv")

In this section you have learnt how to authenticate and read data from ADLS Gen-2 using Storage Account access keys.