Bi-directional LSTMs – BiLSTMs

LSTMs are one type of recurrent neural network, or RNN. RNNs are built to handle sequences and learn their structure. An RNN does this by combining the output generated from the previous item in the sequence with the current item to generate the next output.

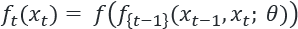

Mathematically, this can be expressed like so:

$$y_t = f(y_{t-1}, x_t; \theta)$$

This equation says that to compute the output $y_t$ at time $t$, the output at $t-1$ is used as an input along with the input data $x_t$ at the same time step. A set of parameters, or learned weights, represented by $\theta$, is also used in computing the output. The objective of training an RNN is to learn these weights.
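To make the recurrence described above concrete, here is a minimal NumPy sketch of a plain RNN rather than an LSTM. The `tanh` activation, the split of the weights into `W_x`, `W_h`, and `b`, and the function names `rnn_step` and `run_rnn` are illustrative assumptions, not something the text prescribes.

```python
import numpy as np

def rnn_step(prev_output, x_t, params):
    """One recurrence step: combine the previous output with the current input.

    The tanh activation and the (W_x, W_h, b) parameter layout are
    assumptions chosen for illustration.
    """
    W_x, W_h, b = params
    return np.tanh(x_t @ W_x + prev_output @ W_h + b)

def run_rnn(inputs, params, output_dim):
    """Process a sequence item by item, feeding each output into the next step."""
    prev_output = np.zeros(output_dim)  # nothing has been seen before the first item
    outputs = []
    for x_t in inputs:
        prev_output = rnn_step(prev_output, x_t, params)
        outputs.append(prev_output)
    return np.stack(outputs)

rng = np.random.default_rng(0)
input_dim, output_dim, seq_len = 4, 3, 5

# Randomly initialized weights stand in for the parameters training would learn.
params = (
    rng.normal(scale=0.1, size=(input_dim, output_dim)),   # W_x: input weights
    rng.normal(scale=0.1, size=(output_dim, output_dim)),  # W_h: recurrent weights
    np.zeros(output_dim),                                  # b: bias
)

sequence = rng.normal(size=(seq_len, input_dim))
outputs = run_rnn(sequence, params, output_dim)
print(outputs.shape)  # (5, 3): one output vector per time step
```

The same `params` are reused at every time step, so training only has to learn one set of weights regardless of sequence length.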

This formulation sets RNNs apart from the models seen so far: in previous examples, we have not used the output of a batch to determine the output of a future batch. While we focus on applications of RNNs to language, where a sentence is modeled as a sequence of words appearing one after the other, RNNs...