Recurrent neural networks (RNNs) allow you to analyze sequential data in deep learning. Although an RNN has sequential dependencies between its steps, there is still plenty of room for optimization. In this section, we will cover the algorithm and how cuDNN provides optimized performance.

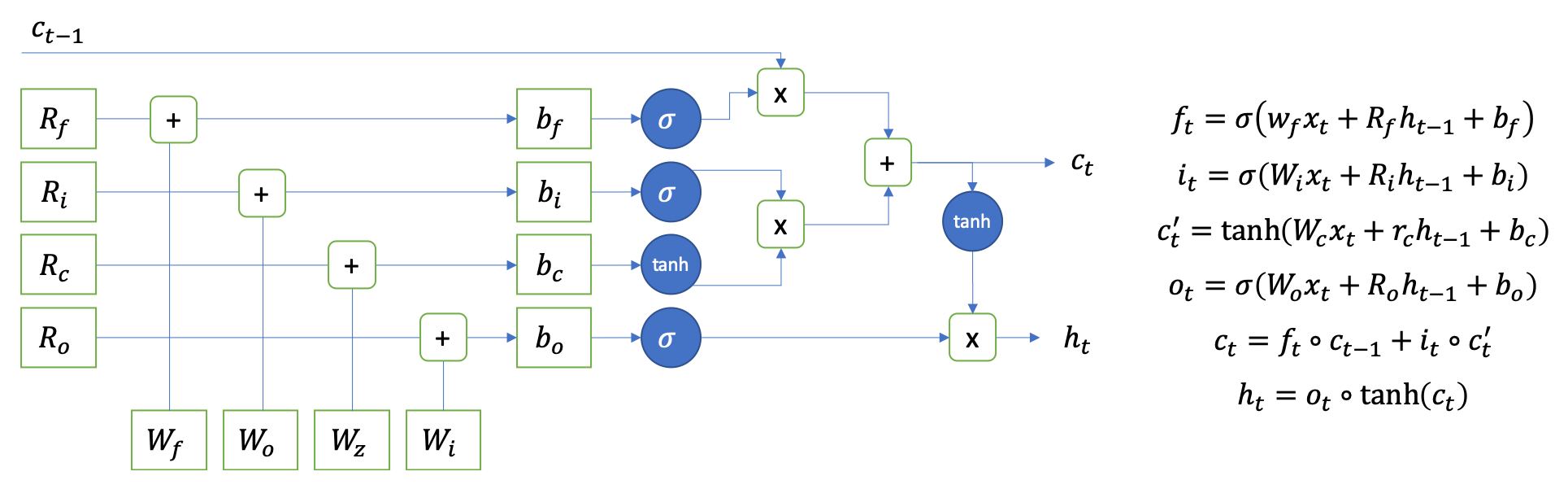

There are many kinds of RNNs, but cuDNN supports only four: RNN with ReLU, RNN with tanh, LSTM, and GRU. Each of them takes two inputs: the hidden state from the previous step of the network and the input from the source. The operations they perform differ by type. In this lab, we will cover the LSTM operation. The following diagram shows the forward operation of the LSTM:

From a computing perspective, one LSTM forward step consists of eight matrix-matrix multiplications and many element-wise operations. From this estimate, we can expect the LSTM to be memory-bound, since each operation is memory...
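To make the count of eight matrix-matrix multiplications concrete, here is a minimal pure-Python sketch of one LSTM forward step. It is not cuDNN code: the names `lstm_step`, `matmul`, `GATES`, `W`, `R`, and `b` are hypothetical and chosen for illustration, and the sketch assumes the common LSTM formulation with a single bias vector per gate and no peephole connections. Each of the four gates multiplies the input by one weight matrix and the previous hidden state by another, which gives 4 x 2 = 8 multiplications, followed by element-wise activations.

```python
import math

def matmul(a, b):
    """Multiply an (n x k) matrix by a (k x m) matrix stored as nested lists."""
    return [[sum(a[i][p] * b[p][j] for p in range(len(b)))
             for j in range(len(b[0]))]
            for i in range(len(a))]

def sigmoid(v):
    return 1.0 / (1.0 + math.exp(-v))

# Hypothetical gate labels: input, forget, output, and candidate cell state.
GATES = ("i", "f", "o", "g")

def lstm_step(x, h_prev, c_prev, W, R, b):
    """One LSTM forward step (illustrative sketch, not the cuDNN API).

    x:      (batch, input_size)   input from the source
    h_prev: (batch, hidden_size)  hidden state from the previous step
    c_prev: (batch, hidden_size)  cell state from the previous step
    W[g]:   (input_size, hidden_size)   input weights for gate g
    R[g]:   (hidden_size, hidden_size)  recurrent weights for gate g
    b[g]:   list of hidden_size bias values for gate g (assumed single bias)
    Returns the new hidden state, new cell state, and the matmul count.
    """
    batch, hidden = len(x), len(h_prev[0])
    pre = {}
    gemm_count = 0
    for gate in GATES:
        wx = matmul(x, W[gate])       # input projection
        rh = matmul(h_prev, R[gate])  # recurrent projection
        gemm_count += 2
        pre[gate] = [[wx[n][j] + rh[n][j] + b[gate][j] for j in range(hidden)]
                     for n in range(batch)]

    h = [[0.0] * hidden for _ in range(batch)]
    c = [[0.0] * hidden for _ in range(batch)]
    # Element-wise part: every value is read once and lightly processed.
    for n in range(batch):
        for j in range(hidden):
            i_t = sigmoid(pre["i"][n][j])
            f_t = sigmoid(pre["f"][n][j])
            o_t = sigmoid(pre["o"][n][j])
            g_t = math.tanh(pre["g"][n][j])
            c[n][j] = f_t * c_prev[n][j] + i_t * g_t
            h[n][j] = o_t * math.tanh(c[n][j])
    return h, c, gemm_count
```

Calling `lstm_step` with any consistent shapes returns a `gemm_count` of 8. The element-wise loop performs only a few arithmetic operations per value it loads, which is the kind of low arithmetic intensity that tends to make a kernel limited by memory traffic rather than by compute.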