Learning PySpark

Now that you have created the swimmersJSON DataFrame, you can query it with both the DataFrame API and SQL. Let's start with a simple query that shows the rows in the DataFrame.

To do this with the DataFrame API, use the show(<n>) method, which prints the first n rows to the console. Calling .show() without an argument prints the first 20 rows by default:

```python
# DataFrame API
swimmersJSON.show()
```

This gives the following output:

If you prefer writing SQL statements, you can write the following query:

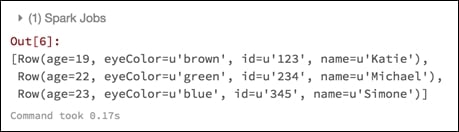

```python
# SQL query
spark.sql("select * from swimmersJSON").collect()
```

This will give the following output:

We are using the .collect() method, which returns all the records as a list of Row objects. You can use either collect() or show() with both DataFrames and SQL queries, because spark.sql() itself returns a DataFrame. If you use .collect(), make sure the DataFrame is small, since it returns all of the rows in the DataFrame and moves them...