Ceph ships with a built-in benchmarking tool, rados bench, which measures the performance of a Ceph cluster at the pool level. It supports write, sequential read, and random read tests, and it can also clean up the temporary benchmark data, which is quite neat.
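As a rough sketch of how these modes map onto the command line, the snippet below prints the command for each one rather than running it, so it is safe to try without a cluster. The `bench_cmd` helper is a hypothetical name introduced here for illustration, and the pool `rbd` and the 10-second duration are assumed values. The sequential and random read tests read back objects left by an earlier write test, which is why the write command keeps its data with `--no-cleanup`.

```shell
#!/bin/sh
# bench_cmd is a hypothetical helper (not part of Ceph): it only prints
# the rados command for a given mode and never touches a cluster.
bench_cmd() {
    pool="$1"      # pool name, e.g. rbd (assumed for this sketch)
    seconds="$2"   # requested test duration in seconds
    mode="$3"      # write, seq, rand, or cleanup
    case "$mode" in
        write)
            # keep the benchmark objects so seq/rand have data to read
            echo "rados bench -p $pool $seconds write --no-cleanup" ;;
        seq|rand)
            echo "rados bench -p $pool $seconds $mode" ;;
        cleanup)
            # removes the objects left behind by the write test
            echo "rados -p $pool cleanup" ;;
        *)
            echo "unknown mode: $mode" >&2
            return 1 ;;
    esac
}

# Print the full sequence: write, both read tests, then cleanup.
for mode in write seq rand cleanup; do
    bench_cmd rbd 10 "$mode"
done
```

To actually run a step, the printed line can be copied into a root shell on a node that has the Ceph client tools and an admin keyring.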

Let's run some tests using rados bench:

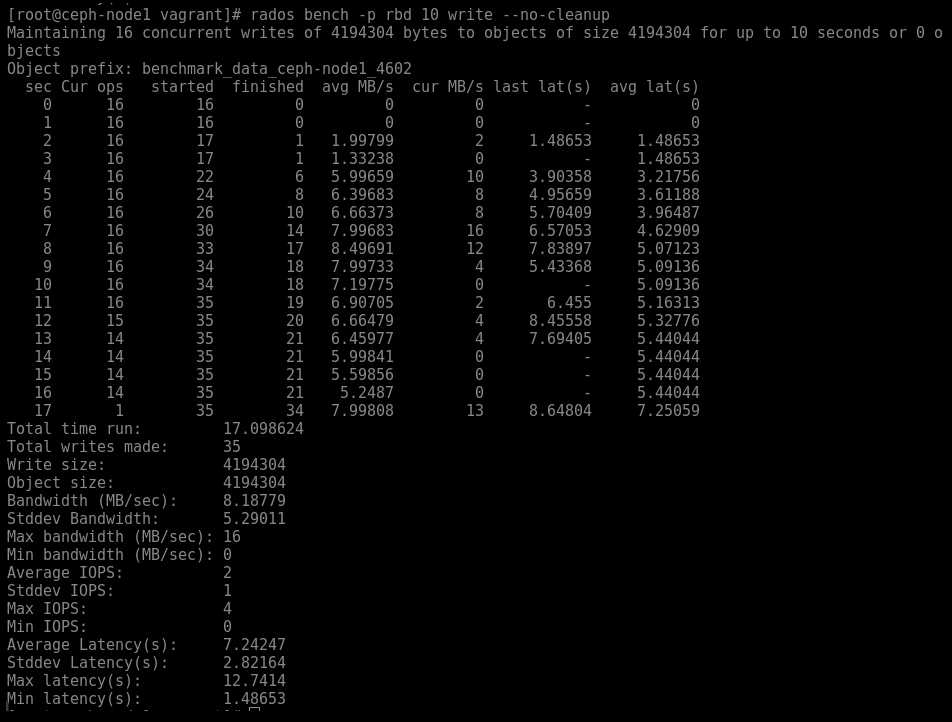

To run a 10-second write test on the rbd pool without cleanup, use the following command:

# rados bench -p rbd 10 write --no-cleanup

We get the following screenshot after executing the command:

You will notice that my test actually ran for a total of 17 seconds. This is because the test was run on VMs, which needed extra time to complete the write operations.

- Similarly, to run a 10-second sequential read test on the RBD pool, run the following: