Detectron2 is an open source project from Facebook (now Meta) AI Research. It is a next-generation library that provides cutting-edge detection and segmentation algorithms, and many research and production projects at Facebook use it to implement computer vision (CV) tasks. The following sections introduce Detectron2 and provide an overview of its architecture.

Introducing Detectron2

Detectron2 implements state-of-the-art detection algorithms, such as Mask R-CNN, RetinaNet, Faster R-CNN, RPN, TensorMask, PointRend, DensePose, and more. A natural question is why a library that reimplements existing cutting-edge algorithms should be any better than the originals. The answer is that Detectron2 has the advantages of being faster, more accurate, modular, customizable, and built on top of PyTorch.

Specifically, it can be faster and more accurate because reimplementing these algorithms is a chance to find suboptimal parts or obsolete features in older implementations and replace them. It is modular: its implementation is divided into sub-parts, namely the input data, backbone network, region proposal heads, and prediction heads (the next section covers these components in more detail). It is customizable: its components ship with built-in implementations, but each can be swapped for a new implementation. Finally, it is built on top of PyTorch, so many developer resources are available online to help develop applications with Detectron2.

Furthermore, Detectron2 provides pre-trained models with state-of-the-art detection results for CV tasks. These models were trained on many images using substantial computational resources at the Facebook research lab, resources that might not be available at other institutions.

These pre-trained models are published on its Model Zoo and are free to use: https://github.com/facebookresearch/detectron2/blob/main/MODEL_ZOO.md.

These pre-trained models help developers build typical CV applications quickly, without collecting and preparing many images or needing substantial computational resources to train new models. However, when a CV task targets a specific domain with a custom dataset, these existing models can serve as the starting weights, and the whole Detectron2 model can be trained again on the custom dataset.
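As a minimal sketch of the starting-weights idea, the function below loads a Model Zoo configuration together with its pre-trained checkpoint and points the configuration at a custom dataset. The function name `build_finetune_cfg`, the choice of a Faster R-CNN configuration, and the dataset name are assumptions made for illustration. The Detectron2 imports are deferred until the function is called, so Detectron2 must be installed at that point.

```python
def build_finetune_cfg(
    train_dataset_name,
    num_classes,
    zoo_config="COCO-Detection/faster_rcnn_R_50_FPN_3x.yaml",
):
    """Build a config that fine-tunes a Model Zoo model on a custom dataset.

    train_dataset_name: name of an already registered dataset (assumed).
    num_classes: number of object classes in the custom dataset.
    zoo_config: Model Zoo config path, chosen here only as an example.
    """
    # Imported lazily so this module can be loaded without Detectron2.
    from detectron2 import model_zoo
    from detectron2.config import get_cfg

    cfg = get_cfg()
    # Start from the architecture and hyperparameters of the zoo model.
    cfg.merge_from_file(model_zoo.get_config_file(zoo_config))
    # Use the pre-trained checkpoint as the starting weights.
    cfg.MODEL.WEIGHTS = model_zoo.get_checkpoint_url(zoo_config)
    # Train on the custom dataset instead of the original one.
    cfg.DATASETS.TRAIN = (train_dataset_name,)
    cfg.DATASETS.TEST = ()
    # The prediction heads must match the number of custom classes.
    cfg.MODEL.ROI_HEADS.NUM_CLASSES = num_classes
    return cfg
```

Calling `build_finetune_cfg("my_dataset", num_classes=1)` would return a configuration that can be handed to a trainer such as `DefaultTrainer`, assuming a dataset has been registered under that name.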

Finally, we can convert Detectron2 models into deployable artifacts. Specifically, we can convert them into the standard file formats of common deep learning frameworks, such as TorchScript, Caffe2 protobuf, and ONNX. These files can then be deployed to their corresponding runtimes, such as PyTorch, Caffe2, and ONNX Runtime. Furthermore, Facebook AI Research also published Detectron2Go (D2Go), a platform where developers can take their Detectron2 development one step further and create models optimized for mobile devices.

In summary, Detectron2 implements cutting-edge detection algorithms with the advantages of being fast, accurate, modular, and built on top of PyTorch. Detectron2 also provides pre-trained models so users can get started and quickly build CV applications with state-of-the-art results. It is also customizable, so users can change its components or train CV applications on a custom business domain. Furthermore, we can export Detectron2 models into formats supported by standard deep learning runtimes. Additionally, Detectron2Go supports developing Detectron2 applications for mobile and edge devices.

In the next section, we will look into the Detectron2 architecture to understand how it works and how each of its components can be customized.

Detectron2 architecture

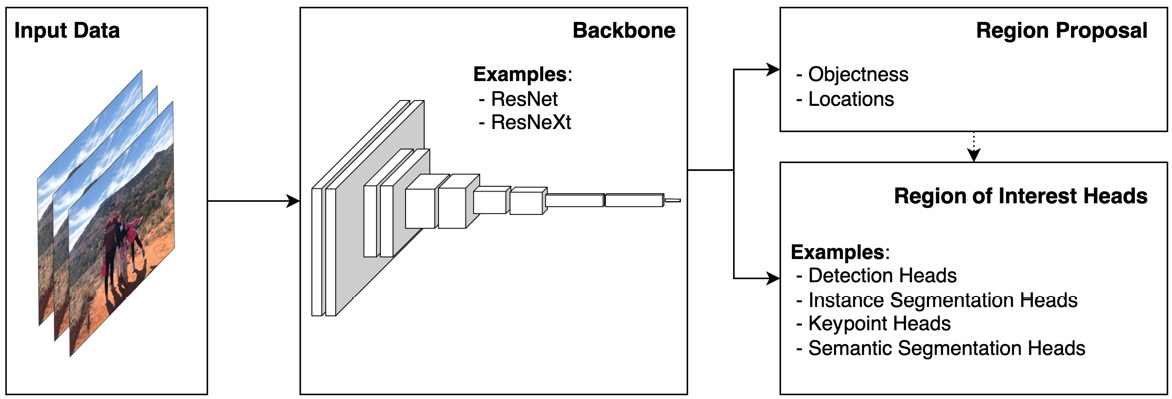

Figure 1.2: The main components of Detectron2

Detectron2 has a modular architecture. Figure 1.2 depicts the four main modules in a standard Detectron2 application. The first module registers and loads the input data (Input Data). The second module is the backbone, which extracts image features (Backbone). The third module proposes regions that may or may not contain objects and feeds them to the next stage (Region Proposal). Finally, the last module uses appropriate heads (such as detection heads, instance segmentation heads, keypoint heads, semantic segmentation heads, or panoptic heads) to predict the regions with objects and classify detected objects into classes.

Chapter 3 to Chapter 5 discuss these components for building a CV application for object detection tasks, and Chapter 10 and Chapter 11 detail these components for segmentation tasks. The following sections briefly discuss these components in general.

The input data module

The input data module is designed to load data in large batches from hard drives with optimization techniques such as caching and multiple workers. Furthermore, it is relatively easy to plug data augmentation techniques into a data loader for this module. Additionally, it is designed to be customizable so that users can register their custom datasets. The following is the typical syntax for registering a custom dataset to train a Detectron2 model using this module:

    from detectron2.data import DatasetCatalog

    # load_my_dataset is a function that returns the dataset records
    DatasetCatalog.register('my_dataset', load_my_dataset)
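The registration call expects a function that returns the dataset as a list of per-image dictionaries. The following is a minimal sketch of such a loader, assuming Detectron2's standard dataset dictionary format; the file path, image size, box coordinates, and class name are made-up values for illustration. The import is guarded so the loader itself can be inspected without Detectron2 installed, in which case the integer `1` stands in for `BoxMode.XYWH_ABS`.

```python
try:
    from detectron2.data import DatasetCatalog, MetadataCatalog
    from detectron2.structures import BoxMode

    XYWH_ABS = BoxMode.XYWH_ABS
except ImportError:
    # Detectron2 is not installed: skip registration and use a stand-in value.
    DatasetCatalog = None
    MetadataCatalog = None
    XYWH_ABS = 1  # stand-in for BoxMode.XYWH_ABS


def load_my_dataset():
    """Return one record per image (hypothetical data for illustration)."""
    return [
        {
            "file_name": "images/0001.jpg",  # hypothetical path
            "image_id": 0,
            "height": 480,
            "width": 640,
            "annotations": [
                {
                    # Box given as [x, y, width, height] in absolute pixels.
                    "bbox": [100.0, 120.0, 200.0, 150.0],
                    "bbox_mode": XYWH_ABS,
                    "category_id": 0,
                }
            ],
        }
    ]


if DatasetCatalog is not None and "my_dataset" not in DatasetCatalog.list():
    # The loader is stored by name and only called when the data is needed.
    DatasetCatalog.register("my_dataset", load_my_dataset)
    # Class names are attached as metadata (hypothetical single class).
    MetadataCatalog.get("my_dataset").thing_classes = ["my_class"]
```

Registering a function rather than the data itself means the records are only loaded when a data loader asks for the dataset by name.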

The backbone module

The backbone module extracts features from the input images, so it typically uses a cutting-edge convolutional neural network such as ResNet or ResNeXt. It can be customized to use any standard convolutional neural network that performs well on the image classification task of interest. Notably, this module benefits greatly from transfer learning: if we want a state-of-the-art convolutional neural network that works well on large image datasets such as ImageNet, we can use pre-trained models here. Alternatively, we can choose a simpler network for this module to reduce training and prediction time, at some cost in accuracy. Chapter 2 will discuss selecting appropriate pre-trained models on the Detectron2 Model Zoo for common CV tasks.

The following code snippet shows the typical syntax for registering a custom backbone network to train the Detectron2 model using this module:

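    from detectron2.modeling import BACKBONE_REGISTRY, Backbone

    @BACKBONE_REGISTRY.register()
    class CustomBackbone(Backbone):
        # Implement forward() and output_shape() here
        pass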
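The decorator syntax relies on a registry that maps class names to classes, so that a configuration can select a component by name. The following is a minimal plain-Python sketch of that pattern. The `Registry` class, `TOY_BACKBONE_REGISTRY`, and the `config` dictionary are hypothetical stand-ins written for illustration, not Detectron2's own classes.

```python
class Registry:
    """Minimal name-to-class registry (illustrative stand-in)."""

    def __init__(self, name):
        self._name = name
        self._obj_map = {}

    def register(self):
        # Used as a decorator: @REGISTRY.register()
        def decorator(cls):
            if cls.__name__ in self._obj_map:
                raise KeyError(
                    f"'{cls.__name__}' is already registered in {self._name}"
                )
            self._obj_map[cls.__name__] = cls
            return cls

        return decorator

    def get(self, name):
        # Look up a registered class by its name.
        if name not in self._obj_map:
            raise KeyError(f"'{name}' is not registered in {self._name}")
        return self._obj_map[name]


TOY_BACKBONE_REGISTRY = Registry("TOY_BACKBONE")


@TOY_BACKBONE_REGISTRY.register()
class CustomBackbone:
    def __init__(self, out_channels):
        self.out_channels = out_channels


# A configuration carries the component name as a string;
# a builder function resolves it through the registry.
config = {"backbone_name": "CustomBackbone", "out_channels": 64}
backbone_cls = TOY_BACKBONE_REGISTRY.get(config["backbone_name"])
backbone = backbone_cls(config["out_channels"])
```

With this design, swapping a component only requires registering a new class and changing a name in the configuration, which is what makes each module replaceable.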

The region proposal module

The next module is the region proposal module (Region Proposal). This module accepts the extracted features from the backbone and proposes image regions (with location specifications), each with an objectness score that indicates whether the region contains an object. The objectness score of a proposed region ranges from 0 (no object, that is, background) to 1 (certainty that the region contains an object of interest). Notably, this objectness score is not the probability of belonging to a particular class of interest; it only indicates whether the region contains an object (of any class) or not (background).
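To make the role of objectness scores concrete, the following plain-Python sketch converts raw scores (logits) into values between 0 and 1 with a sigmoid and keeps the highest-scoring regions. The function `select_proposals`, its thresholds, and the example boxes are assumptions for illustration; a real region proposal network also applies steps such as non-maximum suppression, which are omitted here.

```python
import math


def sigmoid(x):
    """Map a raw score to the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))


def select_proposals(boxes, logits, top_k=2, min_score=0.5):
    """Keep the top_k boxes whose objectness score is at least min_score."""
    scored = [(sigmoid(logit), box) for box, logit in zip(boxes, logits)]
    kept = [pair for pair in scored if pair[0] >= min_score]
    # Highest objectness first.
    kept.sort(key=lambda pair: pair[0], reverse=True)
    return kept[:top_k]


# Hypothetical candidate regions as (x1, y1, x2, y2) with raw scores.
boxes = [(10, 10, 50, 50), (0, 0, 20, 20), (30, 40, 90, 120), (5, 5, 15, 15)]
logits = [2.0, -3.0, 0.5, 4.0]

proposals = select_proposals(boxes, logits)
for score, box in proposals:
    print(f"{box}: objectness {score:.2f}")
```

Note that the scores say nothing about which class a region contains; that decision is left to the heads in the next module.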

This module uses a Region Proposal Network (RPN) by default. However, replacing this network with a custom one is relatively easy. The following is the typical syntax for registering a custom RPN head to train the Detectron2 model using this module:

    from torch import nn
    from detectron2.modeling.proposal_generator import RPN_HEAD_REGISTRY

    @RPN_HEAD_REGISTRY.register()
    class CustomRPNHead(nn.Module):
        pass

The region of interest module

The last module is the place for the region of interest (RoI) heads. Depending on the CV task, we can select appropriate heads for this module, such as detection heads, segmentation heads, keypoint heads, or semantic segmentation heads. For instance, the detection heads accept the region proposals and the input features of the proposed regions and pass them through a fully connected network with two separate output heads. Specifically, one head predicts bounding boxes for objects, and the other classifies the detected bounding boxes into corresponding classes.
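The two-headed design of a detection head can be sketched in plain Python as two linear layers that share the same input features: one produces class probabilities and the other produces four box adjustment values. All function names and weights below are made up for illustration, and the extra background class is an assumption of this sketch; it is not Detectron2's implementation.

```python
import math


def linear(features, weights, bias):
    """Apply one fully connected layer: weights @ features + bias."""
    return [
        sum(w * f for w, f in zip(row, features)) + b
        for row, b in zip(weights, bias)
    ]


def softmax(logits):
    """Turn raw class scores into probabilities that sum to 1."""
    largest = max(logits)
    exps = [math.exp(value - largest) for value in logits]
    total = sum(exps)
    return [value / total for value in exps]


def box_head(features, cls_w, cls_b, box_w, box_b):
    """Run both heads on the same region features."""
    class_probs = softmax(linear(features, cls_w, cls_b))  # classification
    box_deltas = linear(features, box_w, box_b)  # bounding-box prediction
    return class_probs, box_deltas


# Hypothetical features of one proposed region.
features = [1.0, 2.0]
# Two object classes plus one background class (assumed for this sketch).
cls_w = [[0.5, -0.5], [0.1, 0.2], [0.0, 0.0]]
cls_b = [0.0, 0.0, 0.0]
# Four outputs that adjust the position and size of the proposed box.
box_w = [[0.1, 0.0], [0.0, 0.1], [0.05, 0.05], [-0.1, 0.1]]
box_b = [0.0, 0.0, 0.0, 0.0]

class_probs, box_deltas = box_head(features, cls_w, cls_b, box_w, box_b)
predicted_class = class_probs.index(max(class_probs))
```

Sharing the features between the two heads means the region is processed once, while each head specializes in its own output.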

Semantic segmentation heads, on the other hand, use convolutional neural network heads to classify each pixel into one of the classes of interest. The following is the typical syntax for registering custom region of interest heads to train the Detectron2 model using this module:

    from detectron2.modeling import ROI_HEADS_REGISTRY, StandardROIHeads

    @ROI_HEADS_REGISTRY.register()
    class CustomHeads(StandardROIHeads):
        pass

Now that you have an understanding of Detectron2 and its architecture, let's prepare development environments for developing Detectron2 applications.
