Overview of this book

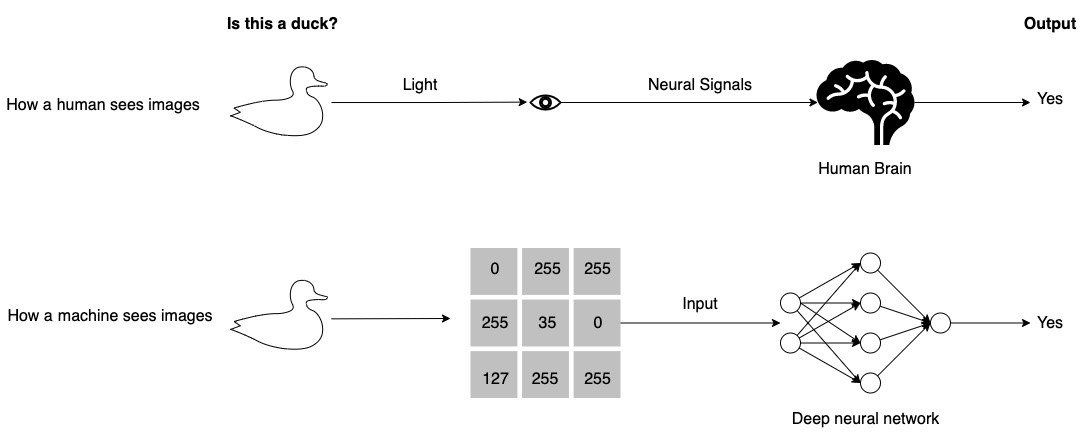

Despite promising advances, the opacity of deep learning models makes them difficult to interpret, which hinders both their practical deployment and their regulatory compliance.

Deep Learning and XAI Techniques for Anomaly Detection presents state-of-the-art methods that help you understand and address these challenges. By applying the Explainable AI (XAI) and deep learning techniques described in this book, you’ll discover how to extract business-critical insights while keeping your analysis fair and ethical.

This practical guide provides tools and best practices for achieving transparency and interpretability with deep learning models, ultimately establishing trust in your anomaly detection applications. Throughout the chapters, you’ll gain the XAI and anomaly detection knowledge you need to take on a series of real-world projects. Whether you are building computer vision, natural language processing, or time series models, you’ll learn how to quantify and assess their explainability.

By the end of this deep learning book, you’ll be able to build a variety of deep learning XAI models and perform validation to assess their explainability.
