Mastering Hadoop

By :

Mastering Hadoop

By:

Overview of this book

Free Chapter

Free Chapter

Sign In

Start Free Trial

Sign In

Start Free Trial

Free Chapter

Free Chapter

A recurring theme in this book is the need to reduce storage use and network data transfer. With large volumes of data, anything that reduces either one improves efficiency in terms of both speed and cost. Compression is one strategy that can help make a Hadoop-based system more efficient.

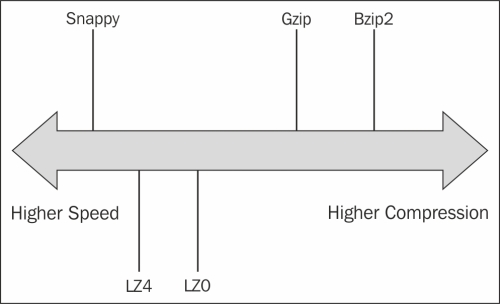

Every compression technique trades speed against space: the greater the space savings, the slower the compression tends to be, and vice versa. Many techniques also let you tune this tradeoff. For example, the gzip tool accepts the options -1 to -9, where -1 optimizes for speed and -9 for space.
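gzip compresses with the DEFLATE algorithm, and its -1 to -9 options correspond to DEFLATE compression levels. As a minimal sketch, assuming only the JDK's `java.util.zip.Deflater` (not Hadoop's codec classes), the following compresses the same synthetic, log-like data at levels 1, 6, and 9 and reports the output size and elapsed time. The class name `DeflateLevels` and the sample data are hypothetical, chosen only for illustration.

```java
import java.nio.charset.StandardCharsets;
import java.util.zip.Deflater;

public class DeflateLevels {

    // Compresses the input with raw DEFLATE (the format gzip wraps) at the given
    // level, 1 = fastest ... 9 = smallest, and returns the compressed byte count.
    static int compressedSize(byte[] input, int level) {
        Deflater deflater = new Deflater(level, true); // true = raw DEFLATE, no zlib header
        try {
            deflater.setInput(input);
            deflater.finish();
            byte[] buffer = new byte[8192];
            int total = 0;
            while (!deflater.finished()) {
                total += deflater.deflate(buffer); // output is discarded; only its size matters here
            }
            return total;
        } finally {
            deflater.end(); // release native zlib memory
        }
    }

    // Hypothetical sample: repetitive, log-like text so the levels differ visibly.
    static byte[] sampleData() {
        StringBuilder sb = new StringBuilder();
        for (int i = 0; i < 50_000; i++) {
            sb.append("2015-02-01 INFO user=").append(i % 997)
              .append(" action=").append(i % 7 == 0 ? "write" : "read")
              .append('\n');
        }
        return sb.toString().getBytes(StandardCharsets.UTF_8);
    }

    public static void main(String[] args) {
        byte[] data = sampleData();
        for (int level : new int[] {1, 6, 9}) {
            long start = System.nanoTime();
            int size = compressedSize(data, level);
            long micros = (System.nanoTime() - start) / 1_000;
            System.out.printf("level %d: %,d -> %,d bytes (%.1fx) in %,d us%n",
                    level, data.length, size, (double) data.length / size, micros);
        }
    }
}
```

On repetitive input like this, level 9 typically yields the smallest output and level 1 the shortest time, but the exact figures depend on the data, the machine, and JVM warm-up (the first iteration includes JIT compilation), so a real measurement would repeat each run several times.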

The accompanying figure places the common compression algorithms on the speed-space spectrum. The gzip tool strikes a good balance between storage and speed. LZO, LZ4, and Snappy are very fast but achieve comparatively low compression ratios. Bzip2 is slower, but it compresses best of the group.

Codecs are concrete implementations...
