Python Deep Learning - Third Edition

Overview of this book

The field of deep learning has developed rapidly in recent years and now covers a broad range of applications. This breadth makes it challenging to navigate and hard to understand without solid foundations. This book will guide you from the basics of neural networks to the state-of-the-art large language models in use today.

The first part of the book introduces the main machine learning concepts and paradigms. It covers the mathematical foundations, the structure, and the training algorithms of neural networks and dives into the essence of deep learning.

The second part of the book introduces convolutional networks for computer vision. We’ll learn how to solve image classification, object detection, instance segmentation, and image generation tasks.

The third part focuses on the attention mechanism and transformers – the core network architecture of large language models. We’ll discuss new types of advanced tasks they can solve, such as chatbots and text-to-image generation.

By the end of this book, you’ll have a thorough understanding of the inner workings of deep neural networks. You’ll have the ability to develop new models and adapt existing ones to solve your tasks. You’ll also have sufficient understanding to continue your research and stay up to date with the latest advancements in the field.

Table of Contents (17 chapters)

Preface

Part 1: Introduction to Neural Networks

Free Chapter

Chapter 1: Machine Learning – an Introduction

Chapter 2: Neural Networks

Chapter 3: Deep Learning Fundamentals

Part 2: Deep Neural Networks for Computer Vision

Chapter 4: Computer Vision with Convolutional Networks

Chapter 5: Advanced Computer Vision Applications

Part 3: Natural Language Processing and Transformers

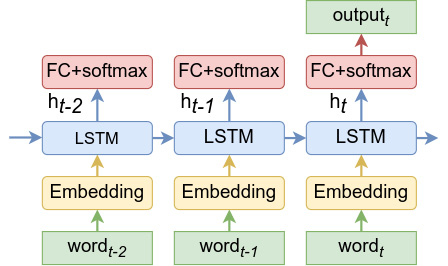

Chapter 6: Natural Language Processing and Recurrent Neural Networks

Chapter 7: The Attention Mechanism and Transformers

Chapter 8: Exploring Large Language Models in Depth

Chapter 9: Advanced Applications of Large Language Models

Part 4: Developing and Deploying Deep Neural Networks

Chapter 10: Machine Learning Operations (MLOps)

Index
