An event is made of two parts: the event header and the event body. The event header contains the name of the event, its timestamp, and the type of event. The event body contains the details of the event.
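The header/body split can be sketched as a small data structure. This is only an illustration: the class names, field names, and sample values below are assumptions and not part of any Azure SDK.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Any


@dataclass(frozen=True)
class EventHeader:
    name: str            # what happened, e.g. "TemperatureRead" (hypothetical)
    event_type: str      # category of the event, e.g. "telemetry" (hypothetical)
    timestamp: datetime  # when the event occurred, in UTC


@dataclass(frozen=True)
class Event:
    header: EventHeader
    body: dict[str, Any] = field(default_factory=dict)  # details of the event


def make_event(name: str, event_type: str, body: dict[str, Any]) -> Event:
    """Build an event whose header is stamped with the current UTC time."""
    header = EventHeader(name, event_type, datetime.now(timezone.utc))
    return Event(header, body)


event = make_event(
    "TemperatureRead", "telemetry", {"device": "thermostat-1", "celsius": 21.5}
)
print(event.header.name, event.header.event_type, event.body)
```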

Events can be triggered by the business process or by many different types of activities.

Events are written to a common log. One of the key characteristics of event streaming is that events are strictly ordered (within a partition) and durable. One key difference between Azure Service Bus and event streaming is that clients don't subscribe to the stream. Instead, a client can read from any part of the stream, which opens up multiple possibilities.

One major advantage is that the client is solely responsible for advancing its position in the stream. This enables the client to join at any given time and to replay events.
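To make the idea of a client-owned position concrete, here is a minimal in-memory sketch. `EventLog` and `Reader` are hypothetical names used only for illustration; a real service persists the log and exposes it over the network.

```python
class EventLog:
    """Append-only, in-memory stand-in for one partition of an event stream."""

    def __init__(self) -> None:
        self._events: list[str] = []

    def append(self, event: str) -> int:
        self._events.append(event)
        return len(self._events) - 1  # offset of the event just appended

    def read(self, offset: int, max_count: int = 10) -> list[str]:
        # Reading never removes anything, so events stay available for replay.
        return self._events[offset:offset + max_count]


class Reader:
    """A client that owns and advances its own position in the log."""

    def __init__(self, log: EventLog, start_offset: int = 0) -> None:
        self.log = log
        self.offset = start_offset

    def poll(self) -> list[str]:
        batch = self.log.read(self.offset)
        self.offset += len(batch)  # the client, not the log, moves the position
        return batch


log = EventLog()
for name in ["e0", "e1", "e2"]:
    log.append(name)

early = Reader(log)
first_pass = early.poll()           # reads everything from the start

late = Reader(log, start_offset=2)  # joins later, at a position of its choosing
late_pass = late.poll()

early.offset = 0                    # rewind to replay the same events
replayed = early.poll()
print(first_pass, late_pass, replayed)
```

Because reading does not consume events, rewinding the offset is all a client needs to do to replay them.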

Event correlation is the process of trying to identify the cause of a situation or condition when massive amounts of data points (potentially related to the situation) exist.

If you need to receive and process millions of events per second, Azure Event Hubs is the ideal solution. Typical use cases include tracking and monitoring telemetry collected from industrial machines, mobile devices, and connected vehicles. Another example is capturing in-game events in console applications.

Event Hubs works with low latency and at massive scale, and serves as the on-ramp for big data:

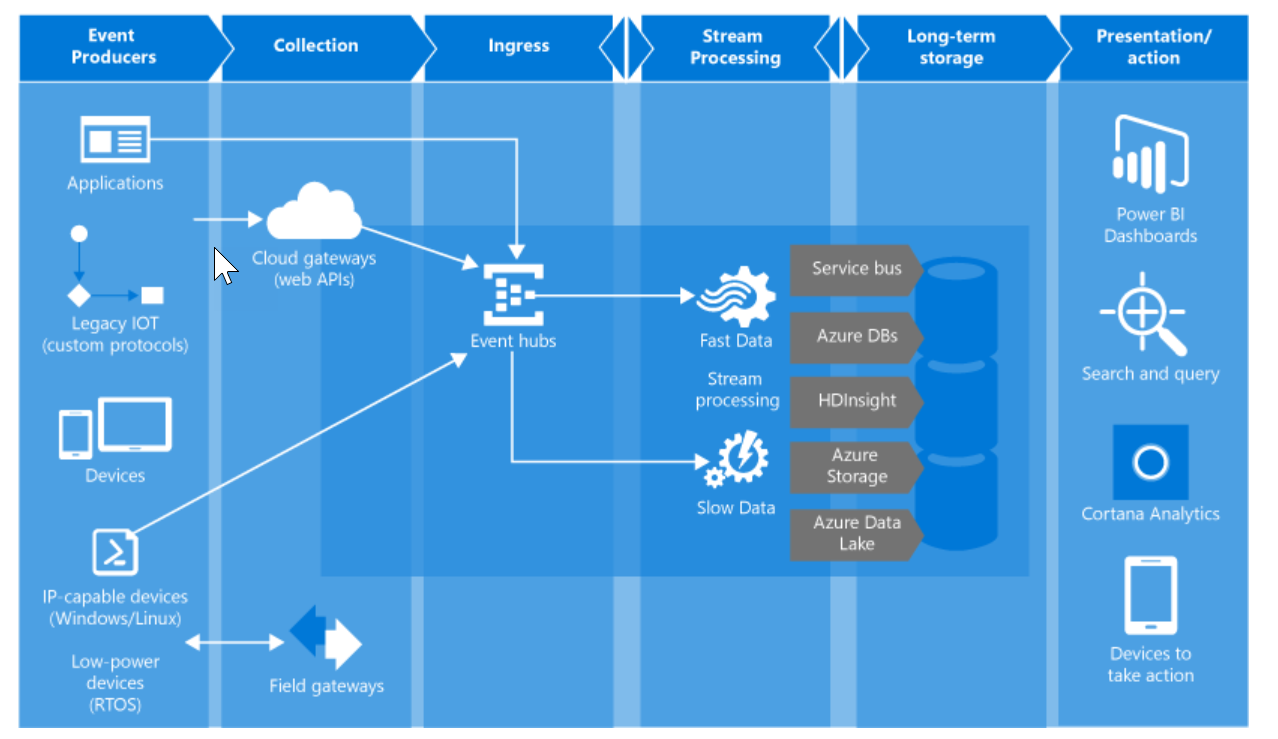

The following screenshot is a canonical implementation of event processing on Azure:

The Advanced Message Queuing Protocol 1.0 is a standardized framing and transfer protocol for asynchronously, securely, and reliably transferring messages between two parties. It is the primary protocol for Azure Service Bus Messaging and Azure Event Hubs. Both services also support HTTPS. The proprietary SBMP protocol that is also supported is being phased out in favor of AMQP.

AMQP 1.0 is the result of broad industry collaboration that brought together middleware vendors, such as Microsoft and Red Hat, with many messaging middleware users, such as JP Morgan Chase representing the financial services industry. The technical standardization forum for the AMQP protocol and extension specifications is OASIS, and AMQP 1.0 has achieved formal approval as an international standard as ISO/IEC 19464.

Event Hubs contain the following key elements:

- Event producers/publishers: The event can be published via AMQP or HTTPS.

- Capture: Azure Blob Storage is used as the data storage repository for the events.

- Partitions: Each partition is an ordered subset of the event stream, which lets a consumer read only a specific subset of the events.

- SAS tokens: Shared access signature (SAS) tokens provide identity and authentication for the event publisher.

- Event consumers (receivers): Any entity that reads event data from an event hub is an event consumer. Event consumers connect using AMQP 1.0.

- Consumer groups: Consumer groups provide scale by giving each consuming application a separate view of the event stream, enabling those consumers to act independently.

- Throughput units: Throughput units provide scaling options. The customer can pre-purchase units of capacity. A single partition has a maximum scale of one throughput unit.
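The consumer group idea from the list above can be sketched as several independent checkpoints over the same partition. This is a simplified in-memory model, not the Event Hubs SDK: `ConsumerGroup`, `checkpoint`, and the group names are assumptions made for illustration.

```python
class ConsumerGroup:
    """One independent view (its own checkpoint) over a shared partition."""

    def __init__(self, name: str, partition: list[str]) -> None:
        self.name = name
        self._partition = partition  # shared, never modified by readers
        self.checkpoint = 0          # position owned by this group only

    def receive(self, max_count: int) -> list[str]:
        start = self.checkpoint
        batch = self._partition[start:start + max_count]
        self.checkpoint += len(batch)
        return batch


partition = ["e0", "e1", "e2", "e3"]

# Two hypothetical applications read the same events at their own pace.
dashboard = ConsumerGroup("dashboard", partition)
archiver = ConsumerGroup("archiver", partition)

dashboard_first = dashboard.receive(2)
archiver_all = archiver.receive(10)
dashboard_rest = dashboard.receive(10)
print(dashboard_first, archiver_all, dashboard_rest)
```

Neither group affects the other: the archiver reading ahead does not change what the dashboard receives next.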

Azure Service Bus works on the competing consumers pattern. In this pattern, multiple consumers process messages from the same channel, as illustrated in the following image.

This improves scalability and availability, and the pattern is useful for asynchronous message processing:
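A minimal sketch of competing consumers, using Python's standard `queue` and `threading` modules as a stand-in for a real message broker. The function name and the sample messages are hypothetical.

```python
import queue
import threading


def run_competing_consumers(messages, consumer_count=3):
    """Let several workers drain one queue; each message is handled once."""
    work = queue.Queue()
    for message in messages:
        work.put(message)

    handled = []             # (consumer_id, message) pairs
    lock = threading.Lock()  # protects the shared result list

    def consume(consumer_id):
        while True:
            try:
                message = work.get_nowait()  # consumers compete for the next message
            except queue.Empty:
                return                       # nothing left to process
            with lock:
                handled.append((consumer_id, message))

    threads = [
        threading.Thread(target=consume, args=(i,)) for i in range(consumer_count)
    ]
    for thread in threads:
        thread.start()
    for thread in threads:
        thread.join()
    return handled


orders = [f"order-{i}" for i in range(10)]
handled = run_competing_consumers(orders)
print(sorted(message for _, message in handled))
```

Which consumer handles which message varies from run to run, but every message is processed by exactly one consumer.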

Event Hubs, on the other hand, works on the concept of partitions. An event hub is composed of multiple partitions that receive messages from publishers. As the volume of messages increases, the number of partitions can be increased to handle the additional load.

Having more partitions increases the capacity to handle messages and enables higher throughput:
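One common way to spread events across partitions is to hash a partition key. The sketch below assumes a SHA-256 based hash purely for illustration; it is not the hashing scheme Event Hubs uses internally, and `partition_for` and `publish` are hypothetical helpers.

```python
import hashlib
from collections import defaultdict


def partition_for(key: str, partition_count: int) -> int:
    """Map a partition key to a partition index with a stable hash."""
    digest = hashlib.sha256(key.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % partition_count


def publish(events, partition_count):
    """Append each (key, payload) pair to the partition chosen by its key."""
    partitions = defaultdict(list)
    for key, payload in events:
        partitions[partition_for(key, partition_count)].append((key, payload))
    return partitions


events = [
    ("device-a", 1),
    ("device-b", 1),
    ("device-a", 2),
    ("device-c", 1),
    ("device-a", 3),
]
partitions = publish(events, partition_count=4)
for index in sorted(partitions):
    print(index, partitions[index])
```

Events that share a key always land in the same partition, so their relative order is preserved even though the load is spread across partitions.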

In summary, real-time streaming is all around us, be it a simple thermostat, your car's telemetry, or household electric meter data. Data is constantly streamed without anyone realizing it. For instance, when you are driving a car, the onboard computer is constantly performing calculations on telemetry data on the fly.

The final decision maker when it comes to the car is the driver in the driving seat. That may not be true in other scenarios, such as modern collision avoidance systems. If the onboard computer has enough data points to conclude that the car will collide with the car in front, it will decide to slow you down. That's where real-time decision making comes into play.

The key objective of this book is to give you a strong foundation in event processing using Azure; as a reader, you can go on to do bigger and better things using this technology.

Before we dive further into this broad topic, let's see some of the core basics you need to know to get started.

An event-driven architecture can involve building a publish/subscribe model, an event streaming model, and a processing system:

- Publish/Subscribe: The underlying messaging infrastructure keeps track of subscriptions. Each subscriber receives an event when it is published. After the event is received, it cannot be replayed, and new subscribers cannot see it. In other words, you get only one opportunity to process the message. There is no way to go back to the message to reprocess or retry it.

- Event streaming: In event streaming, clients are independent of the event producers, and they read from a common log. A client can read from any part of the stream and is responsible for advancing its position in the stream. This also gives clients the flexibility to join at any time and replay events as they want. One key feature of event streaming is that, in a given partition, events are sequentially ordered and durable.

- Processing systems: Message or event data is simply data with a timestamp. This data needs to be processed by applying business logic or rules to derive an outcome. There are three well-known processing systems:

  - Simple event processing

  - Event stream processing

  - Complex event processing
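The publish/subscribe behavior described above, where events are not retained for late subscribers, can be sketched with a tiny in-memory broker. `PubSubBroker` and the event names are hypothetical and exist only to illustrate the semantics.

```python
from typing import Callable


class PubSubBroker:
    """Pushes each event to current subscribers and does not retain it."""

    def __init__(self) -> None:
        self._subscribers: list[Callable[[str], None]] = []

    def subscribe(self, handler: Callable[[str], None]) -> None:
        self._subscribers.append(handler)

    def publish(self, event: str) -> None:
        for handler in list(self._subscribers):
            handler(event)
        # The event is not stored, so it cannot be replayed later.


broker = PubSubBroker()
early_inbox: list[str] = []
late_inbox: list[str] = []

broker.subscribe(early_inbox.append)
broker.publish("order-created")

broker.subscribe(late_inbox.append)  # subscribes after the first event
broker.publish("order-shipped")

print(early_inbox, late_inbox)
```

The late subscriber never sees `order-created`, which is the key contrast with event streaming, where a client could rewind and read it from the log.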

In simple event processing, an event immediately triggers an action in the consumer. For instance, you can use an Azure Function that executes when it receives a message on a Service Bus topic.

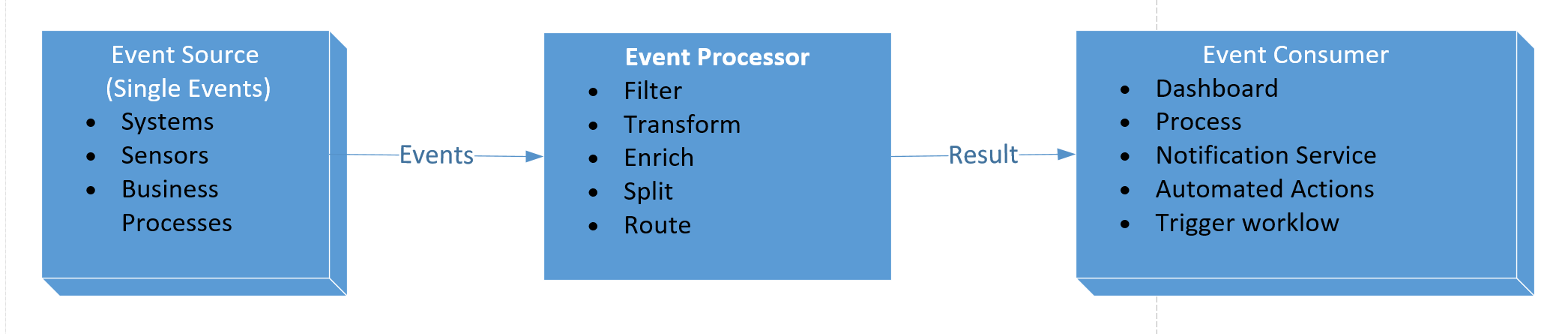

Simple event processing (SEP) is used whenever you need to handle events in a simple way. There are not many differences between the events, so the system just processes all of them.

In simple event processing, multiple single events land in the processing engine, where they are filtered, transformed, split, and routed. A classic example is URL matching in a web server. Let's say you have a shopping portal where users can select or click on different products. The web server filters each request based on the URL it receives from the interaction and routes it accordingly.

The key characteristic of simple event processing is that a single event is processed without looking at other events. Events are processed one at a time.

The following are the stages of SEP:

- Filter: Filtering the event stream for a specific type of event

- Transform: Transforming the event schema from one form to another

- Enrich: Augmenting the event payload with additional data

- Split: Splitting the events into multiple events and processing them

- Route: Moving the event from one channel or stream to another
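The five stages above can be sketched as one function that handles a single event without looking at any other event. The event shapes, the lookup tables, and the channel names are all assumptions made for this illustration.

```python
from collections import defaultdict

# Hypothetical reference data used by the enrich and route stages.
CUSTOMER_REGIONS = {"c1": "EU", "c2": "US"}
DIGITAL_ITEMS = {"ebook"}


def process(event: dict, channels: dict) -> None:
    """Run one event through filter, transform, enrich, split, and route."""
    # Filter: keep only order events.
    if event.get("type") != "order":
        return
    # Transform: map the incoming schema to the internal one.
    order = {"customer_id": event["customer"], "items": event["items"]}
    # Enrich: add data that the original payload did not carry.
    order["region"] = CUSTOMER_REGIONS.get(order["customer_id"], "unknown")
    # Split: emit one event per ordered item.
    for item in order["items"]:
        line = {
            "customer_id": order["customer_id"],
            "region": order["region"],
            "item": item,
        }
        # Route: pick the destination channel for each resulting event.
        channel = "digital" if item in DIGITAL_ITEMS else "warehouse"
        channels[channel].append(line)


channels = defaultdict(list)
incoming = [
    {"type": "order", "customer": "c1", "items": ["ebook", "pen"]},
    {"type": "heartbeat"},
    {"type": "order", "customer": "c9", "items": ["desk"]},
]
for event in incoming:
    process(event, channels)

print(dict(channels))
```

Note that `process` keeps no state between calls, which is what makes this simple event processing rather than stream or complex event processing.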

In event stream processing, continuous streams of data are processed in real time by applying a series of operations (stream processors) to each data point. The event stream processors (ESPs) process or transform the stream of data.

For example, you can use data streaming platforms, such as Azure IoT Hub or Apache Kafka, as a pipeline to ingest events and feed them to stream processors, as shown in the following illustration. Depending on the scale and complexity, there may be more than one stream processor working on the various subsystems of a given application. This approach is a good fit for clickstream analytics, IoT and device telemetry, and credit fraud detection:

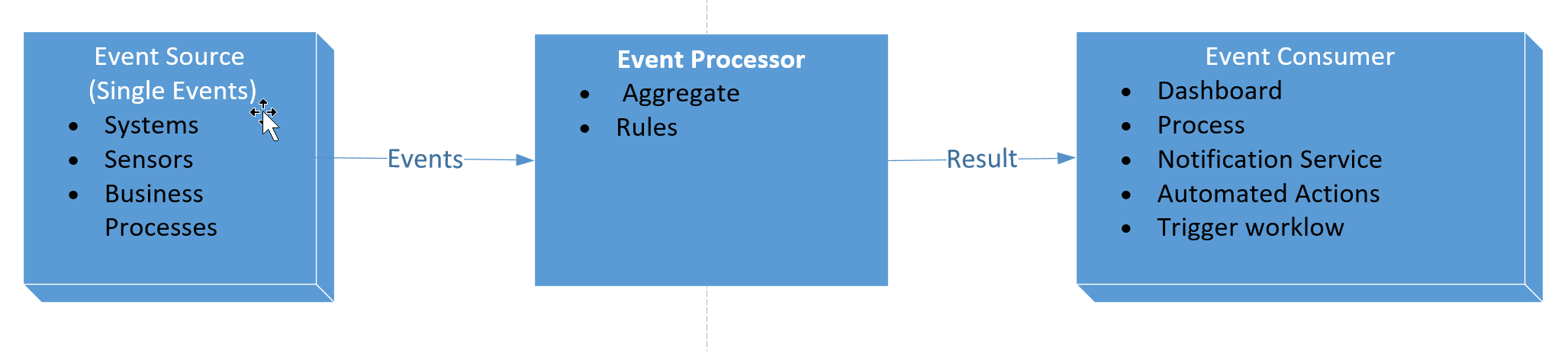
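A chain of stream processors can be sketched with Python generators, where each processor consumes one stream and yields another. The list of strings stands in for an ingestion platform, and the processor names and record format are hypothetical.

```python
from typing import Iterable, Iterator


def parse(lines: Iterable[str]) -> Iterator[dict]:
    """Stream processor 1: turn raw 'device,celsius' text into records."""
    for line in lines:
        device, value = line.split(",")
        yield {"device": device, "celsius": float(value)}


def to_fahrenheit(readings: Iterable[dict]) -> Iterator[dict]:
    """Stream processor 2: transform each data point as it flows through."""
    for reading in readings:
        yield {**reading, "fahrenheit": reading["celsius"] * 9 / 5 + 32}


def running_max(readings: Iterable[dict]) -> Iterator[tuple]:
    """Stream processor 3: keep per-device state and emit the maximum so far."""
    highest: dict = {}
    for reading in readings:
        device = reading["device"]
        highest[device] = max(
            highest.get(device, reading["fahrenheit"]), reading["fahrenheit"]
        )
        yield device, highest[device]


source = ["d1,20.0", "d2,25.0", "d1,22.5"]  # stand-in for an ingested stream
results = list(running_max(to_fahrenheit(parse(source))))
print(results)
```

Each data point passes through the whole chain before the next one is read, so the pipeline works on unbounded input without buffering the full stream.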

Forrester defines a CEP platform as a software infrastructure that can detect patterns of events (and expected events that didn’t occur) by filtering, correlating, contextualizing, and analyzing data captured from disparate live data sources to respond as defined using the platform’s development tools.

Complex event processing (CEP) is a subset of event stream processing. CEP enables you to gain insights from large volumes of data in near real time by monitoring, analyzing, and acting on data while it is in motion. Data is typically generated by business or system events, such as placing an order or adding a message to a queue. CEP is the continuous monitoring and processing of events from multiple sources on a near-real-time basis. Because it analyzes data as it arrives, CEP lends itself to predictive scenarios that enable more proactive decisions.

Typical scenarios may include:

- Monitoring the effectiveness of key performance indicators (KPIs) by using data from event streams

- Monitoring the health and availability of servers and networks, and compliance with service-level thresholds

- Fraud detection

- Stock ticker analysis—taking action when certain events occur or price points are achieved

- Performance history—predicting spikes

- Buying patterns (what product/pricing combinations are most popular)

The concept behind CEP is the aggregation of information over a time window or looking for a pattern and generating a notification when the aggregation of data or pattern breaches a defined condition. The emphasis is placed on detection of the event.
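The aggregation-over-a-time-window idea can be sketched with a sliding window that raises a notification when the windowed total breaches a threshold. The class name, the 60-second window, the threshold, and the sample transactions are all assumptions chosen for illustration.

```python
from collections import deque


class SlidingWindowDetector:
    """Sum values over the last `window_seconds` and flag threshold breaches."""

    def __init__(self, window_seconds: int, threshold: int) -> None:
        self.window_seconds = window_seconds
        self.threshold = threshold
        self._events: deque = deque()  # (timestamp, value), oldest first
        self._total = 0

    def observe(self, timestamp: int, value: int) -> bool:
        """Add one event; return True if the windowed total exceeds the threshold."""
        self._events.append((timestamp, value))
        self._total += value
        # Evict events that have fallen out of the time window.
        cutoff = timestamp - self.window_seconds
        while self._events and self._events[0][0] <= cutoff:
            _, old_value = self._events.popleft()
            self._total -= old_value
        return self._total > self.threshold


# Hypothetical card transactions as (seconds since start, amount) pairs.
transactions = [(0, 400), (20, 300), (50, 400), (130, 200)]
detector = SlidingWindowDetector(window_seconds=60, threshold=1000)

alerts = [ts for ts, amount in transactions if detector.observe(ts, amount)]
print(alerts)
```

Here the first three transactions fall within one 60-second window and together exceed the threshold, so the detector flags the third one; by the fourth, the earlier events have expired from the window.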

CEP has its origins in the stock market, so it is tuned for low latency and often responds within a few milliseconds or less. Some of the events can be ignored without impact.

Internet of Things (IoT) applications are very good use cases for CEP because they produce auto-correlated time series data. IoT use cases are usually complex and go beyond aggregation and calculation of data. They need complex operations such as time windows and temporal query patterns. The availability of temporal operators makes it easy to process time series data efficiently. The following figure illustrates the CEP flow: