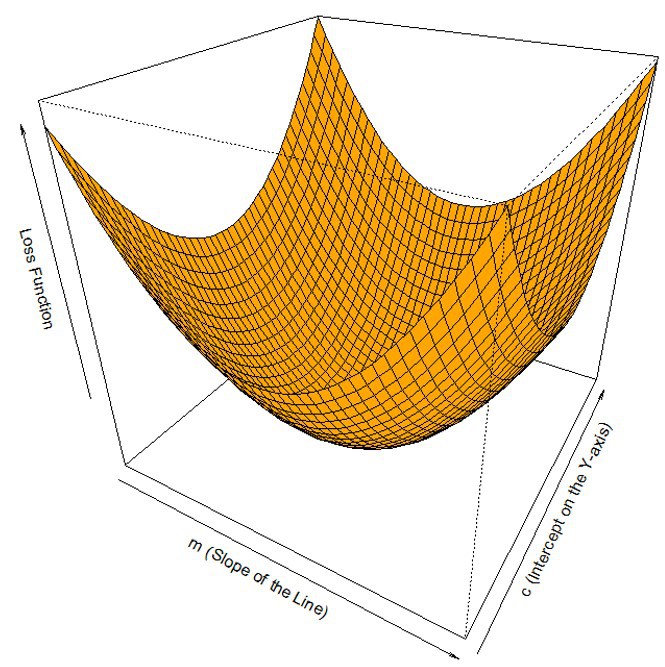

Gradient descent is an algorithm that minimizes functions. Given a function defined by a set of parameters, gradient descent starts with an initial set of parameter values and iteratively moves toward a set of parameter values that minimizes the function.
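To make the iteration concrete, here is a minimal sketch in Python. It assumes a simple one-parameter function, f(x) = (x - 3)^2, chosen purely for illustration; the names `gradient_descent`, `f_grad`, `learning_rate`, and `n_steps` are hypothetical and not part of any library. At each iteration the parameter is moved a small step against the gradient:

```python
def gradient_descent(grad, x0, learning_rate=0.1, n_steps=100):
    """Start from x0 and repeatedly step against the gradient."""
    x = x0
    for _ in range(n_steps):
        # Move in the direction of the negative gradient.
        x = x - learning_rate * grad(x)
    return x


# Illustrative function (an assumption): f(x) = (x - 3)^2,
# whose derivative is 2 * (x - 3) and whose minimum is at x = 3.
def f_grad(x):
    return 2.0 * (x - 3.0)


x_min = gradient_descent(f_grad, x0=0.0)
print(x_min)  # very close to 3.0
```

The learning rate controls the step size: if it is too small, convergence is slow, and if it is too large, the iterates can overshoot the minimum and diverge.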

This iterative minimization is achieved using calculus: at each iteration, the algorithm takes a step in the direction of the negative gradient of the function, as can be seen in the following diagram:

Gradient descent is one of the most widely used optimization algorithms. As mentioned earlier, it is used to update the weights of a neural network so that the loss function is minimized. Let's now talk about an important neural network method called backpropagation. We first propagate forward: we calculate the dot product of the inputs with their corresponding weights, and then apply an activation function to the sum of products. The activation function transforms the input into an output and adds nonlinearities to the model, which enables the model to learn almost any arbitrary functional...
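The forward step described above can be sketched for a single neuron as follows. This is only an illustration: the text does not name a specific activation function, so the sigmoid is assumed here, and the names `sigmoid` and `neuron_forward` are hypothetical.

```python
import math


def sigmoid(z):
    """Sigmoid activation (an assumed choice): squashes z into (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def neuron_forward(inputs, weights):
    """Forward pass of one neuron: weighted sum, then activation."""
    # Dot product of the inputs with their corresponding weights.
    z = sum(x * w for x, w in zip(inputs, weights))
    # The activation function adds the nonlinearity.
    return sigmoid(z)


output = neuron_forward([1.0, 2.0], [0.5, -0.25])
print(output)  # 0.5, since the weighted sum is 0 and sigmoid(0) = 0.5
```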