Linear regression is by far the most widely used, or at least the most commonly known, regression method. The term usually refers to fitting a model to data by minimizing the error between the expected and predicted values, measured as the sum of squared errors, also known as the residual sum of squares or the least-squares error.

Least squares problems fall into the following two categories:

- Ordinary least squares
- Nonlinear least squares

One-variate linear regression

Let's start with the simplest form of linear regression, single-variable regression, to introduce the terms and concepts behind linear regression. In its simplest interpretation, one-variate linear regression consists of fitting a line to a set of data points {x, y}.

Note

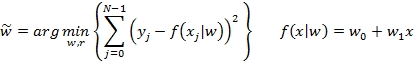

M1: A single-variable linear regression for a model f with weights wj for features xj and labels (or expected values) yj is given by the following formula:
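Written out as a sketch under the standard least-squares definition, the model and its fitting criterion would read as follows. The symbol n for the number of observations and the index range j = 0, ..., n-1 are assumptions, not taken from the text.

```latex
\[
f(x \mid w) = w_0 + w_1 x,
\qquad
\tilde{w} = \arg\min_{w_0,\, w_1} \sum_{j=0}^{n-1} \bigl( y_j - f(x_j \mid w) \bigr)^2
\]
```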

Here, w1 is the slope, w0 is the intercept...
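To make the line fit concrete, here is a minimal Python sketch of the closed-form ordinary least squares solution for a single variable. The function names `fit_line` and `rss` and the sample data are hypothetical, chosen only for illustration; `w0` and `w1` follow the intercept and slope naming used above.

```python
from typing import Sequence, Tuple


def fit_line(x: Sequence[float], y: Sequence[float]) -> Tuple[float, float]:
    """Return (w0, w1) minimizing the sum of squared residuals of y ~ w0 + w1 * x."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("need at least two (x, y) pairs of equal length")
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    # Sum of squared deviations of x and cross-deviations of x and y.
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    if sxx == 0.0:
        raise ValueError("all x values are identical; the slope is undefined")
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    w1 = sxy / sxx               # slope
    w0 = mean_y - w1 * mean_x    # intercept
    return w0, w1


def rss(x: Sequence[float], y: Sequence[float], w0: float, w1: float) -> float:
    """Residual sum of squares of the line w0 + w1 * x over the observations."""
    return sum((yi - (w0 + w1 * xi)) ** 2 for xi, yi in zip(x, y))


# Hypothetical sample data lying exactly on the line y = 1 + 2x.
xs = [0.0, 1.0, 2.0, 3.0]
ys = [1.0, 3.0, 5.0, 7.0]
w0, w1 = fit_line(xs, ys)
residual = rss(xs, ys, w0, w1)
```

Because the sample points lie exactly on y = 1 + 2x, the fit recovers an intercept of 1 and a slope of 2, and the residual sum of squares is zero. With noisy data the same function returns the line that minimizes that sum rather than one passing through every point.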