Technical requirements

You can find the code for this chapter in the GitHub repository here: https://github.com/PacktPublishing/Data-Ingestion-with-Python-Cookbook.

Installing and running Airflow

This chapter requires that Airflow is installed on your local machine. You can install it directly on your operating system (OS) or by using a Docker image. For more information, refer to the Configuring Docker for Airflow recipe in Chapter 1.

After following the steps described in Chapter 1, confirm that Airflow is running correctly. You can do that by opening the Airflow UI at http://localhost:8080.

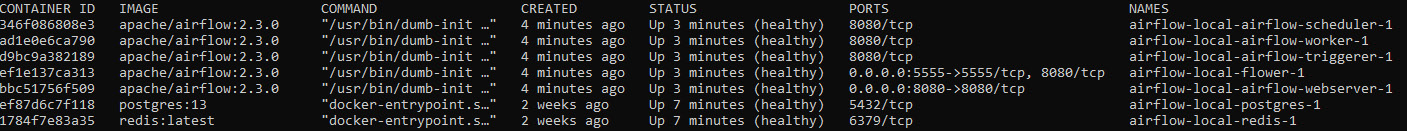
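If you prefer a check you can script, the following is a minimal sketch that assumes an Airflow 2.x webserver, which serves a JSON health report at the `/health` path (other versions may use a different path). The function names `fetch_health` and `unhealthy_components` are hypothetical helpers introduced only for this illustration.

```python
import json
from urllib.request import urlopen

# Assumption: Airflow 2.x webserver listening on the default local port.
HEALTH_URL = "http://localhost:8080/health"


def fetch_health(url: str = HEALTH_URL, timeout: float = 5.0) -> dict:
    """Download and decode the JSON health report from the webserver."""
    with urlopen(url, timeout=timeout) as response:
        return json.load(response)


def unhealthy_components(report: dict) -> list[str]:
    """Return the names of components that report a status other than 'healthy'.

    Components with no status at all (for example, one that is not deployed)
    are skipped rather than treated as failures.
    """
    return sorted(
        name
        for name, details in report.items()
        if isinstance(details, dict)
        and details.get("status") not in (None, "healthy")
    )
```

Calling `unhealthy_components(fetch_health())` should return an empty list when every reported component is healthy. If the call raises a connection error instead, the webserver is most likely not running or not reachable on port 8080.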

If you are using a Docker container (as I am) to host your Airflow application, you can check its status from the terminal by running the following command:

$ docker ps

The output lists the running containers, as shown here:

Figure 10.1 – Airflow containers running
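The same check can be done from Python, which is handy if you want to verify the containers before triggering a pipeline. This is only a sketch under a few assumptions: the `docker` CLI is on your `PATH`, and the helper names `parse_ps_output` and `running_containers` are hypothetical. It relies on the `--format` option of `docker ps` to print one tab-separated name and status per line.

```python
import subprocess

# Go template for docker ps: container name, a tab, then the status text.
PS_FORMAT = "{{.Names}}\t{{.Status}}"


def parse_ps_output(output: str) -> dict[str, str]:
    """Turn 'name<TAB>status' lines into a {name: status} dictionary."""
    containers = {}
    for line in output.splitlines():
        if not line.strip():
            continue  # ignore blank lines
        name, _, status = line.partition("\t")
        containers[name.strip()] = status.strip()
    return containers


def running_containers() -> dict[str, str]:
    """Run docker ps and return the running containers with their status."""
    result = subprocess.run(
        ["docker", "ps", "--format", PS_FORMAT],
        capture_output=True,
        text=True,
        check=True,  # raise CalledProcessError if docker exits with an error
    )
    return parse_ps_output(result.stdout)
```

Calling `running_containers()` returns a dictionary you can inspect for your Airflow services. The container names depend on how your Docker setup was configured in Chapter 1, so filter by whatever names appear in your own `docker ps` output.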

If you use Docker Desktop, you can also check the container status there, as shown in the following screenshot:

...