Well, now we're confident about making HTTP requests to multiple URLs. We also looked at a simple example of web scraping.

But the Web is made up of pages in many data formats. If we want to scrape the Web and make sense of the data, we also need to know how to parse the different formats in which that data is available.

In this recipe, we'll discuss how to select HTML elements and extract data from them.

Data on the Web is mostly in the HTML or XML format. To understand how to parse web content, we'll take an example of an HTML file. We'll learn how to select certain HTML elements and extract the desired data. For this recipe, you need to install the BeautifulSoup module of Python. The BeautifulSoup module is one of the most comprehensive Python modules that will do a good job of parsing HTML content. So, let's get started.

We start by installing BeautifulSoup on our Python instance. The following command will help us install the module. We install the latest version, which is beautifulsoup4:

    pip install beautifulsoup4

Now, let's take a look at the following HTML file, which will help us learn how to parse the HTML content:

    <html xmlns="http://www.w3.org/1999/html">
    <head>
        <title>Enjoy Facebook!</title>
    </head>
    <body>
        <p>
            <span>You know it's easy to get intouch with your <strong>Friends</strong> on web!<br></span>
            Click here <a href="https://facebook.com">here</a> to sign up and enjoy<br>
        </p>
        <p class="wow">
            Your gateway to social web!
        </p>
        <div id="inventor">Mark Zuckerberg</div>
        Facebook, a webapp used by millions
    </body>
    </html>

Let's name this file python.html. Our HTML file is hand-crafted so that we can learn the multiple ways of parsing it to get the required data from it. python.html has typical HTML tags and attributes, given as follows:

- <head>: the container of all head elements, like <title>.
- <body>: defines the body of the HTML document.
- <p>: defines a paragraph in HTML.
- <span>: used to group inline elements in a document.
- <strong>: marks the text inside it as important; browsers typically render it in bold.
- <a>: represents a hyperlink or anchor; its href attribute holds the URL that the hyperlink points to.
- class: an attribute (not a tag) that points to a class in a style sheet.
- <div>: a container that encapsulates other page elements and divides the content into sections. Every section can be identified by its id attribute.

If we open this HTML in a browser, this is how it'll look:

Let's now write some Python code to parse this HTML file. We start by creating a BeautifulSoup object.

Tip

We always need to define the parser. In this case, we used lxml as the parser. The parser helps us read files in a designated format so that querying data becomes easy. Note that lxml is a separate package that has to be installed as well, for example, with pip install lxml.

    import bs4

    # Read python.html and build the soup with the lxml parser
    with open('python.html') as myfile:
        soup = bs4.BeautifulSoup(myfile, "lxml")  # Making the soup

    print("BeautifulSoup Object:", type(soup))

The output of the preceding code is seen in the following screenshot:
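As a side note on the parser choice, here is a minimal sketch that builds the soup from a string rather than a file and uses "html.parser", the parser that ships with Python's standard library, so no extra install is needed. The shortened html_doc string and the title_text variable are assumptions made for this illustration; they are not part of the recipe's python.html workflow.

```python
import bs4

# A shortened copy of python.html, kept in a string for this sketch only.
html_doc = """
<html>
  <head><title>Enjoy Facebook!</title></head>
  <body>
    <div id="inventor">Mark Zuckerberg</div>
  </body>
</html>
"""

# "html.parser" is bundled with Python; "lxml" is a separate package.
soup = bs4.BeautifulSoup(html_doc, "html.parser")

# soup.title is the first <title> tag; .string is its text content.
title_text = soup.title.string
print("Title:", title_text)
```

For a small, well-formed page like ours, either parser should produce the same tree; the two can differ in how they repair badly broken markup.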

OK, that's neat, but how do we retrieve data? Before we try to retrieve data, we need to select the HTML elements that contain the data we need.

We can select or find HTML elements in different ways: by ID, by CSS class, or by tag name. The following code uses python.html to demonstrate this concept:

    # Find elements by tag
    print(soup.find_all('a'))
    print(soup.find_all('strong'))

    # Find elements by id
    print(soup.find('div', {"id": "inventor"}))
    print(soup.select('#inventor'))

    # Find elements by CSS class
    print(soup.select('.wow'))

The output of the preceding code can be viewed in the following screenshot:

Now let's move on and get the actual content from the HTML file. The following are a few ways in which we can extract the data of interest:

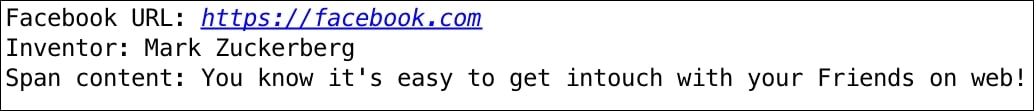

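    # The href attribute of the first anchor
    print("Facebook URL:", soup.find_all('a')[0]['href'])

    # The text inside the div with id "inventor"
    print("Inventor:", soup.find('div', {"id": "inventor"}).text)

    # The text inside the first span
    print("Span content:", soup.select('span')[0].getText())

The output of the preceding code snippet is as follows: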

Whoopie! See how we got all the text we wanted from the HTML elements.

In this recipe, you learnt the skill of finding or selecting different HTML elements based on ID, CSS, or tags.

In the second code example of this recipe, we used find_all('a') to get all the anchor elements from the HTML file. The find_all() method returns every match in a list, even when only one element matches, whereas find() returns just the first match. The select() method takes a CSS selector and also returns a list of matching elements.
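The difference between these methods shows up in what they return. The following is a small sketch, assuming a two-element snippet (html_doc) written just for this illustration and the built-in "html.parser" instead of lxml. It also uses select_one(), which is not used elsewhere in this recipe and returns only the first match for a CSS selector.

```python
import bs4

# Illustrative snippet, loosely based on python.html.
html_doc = """
<p>Click <a href="https://facebook.com">here</a> to sign up</p>
<div id="inventor">Mark Zuckerberg</div>
"""
soup = bs4.BeautifulSoup(html_doc, "html.parser")

all_links = soup.find_all('a')          # list of every <a> element
first_link = soup.find('a')             # first matching element, or None
selected = soup.select('#inventor')     # list of elements matching the selector
one_div = soup.select_one('#inventor')  # first match for the selector, or None
missing = soup.find('table')            # nothing matches, so this is None

print(len(all_links), first_link['href'], len(selected), one_div.text, missing)
```

Because find() and select_one() return None when nothing matches, it is worth checking the result before reading .text or an attribute from it.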

We also used find('div', {"id": "inventor"}) and select('#inventor') to select an HTML element by its ID. Note how we selected the inventor element in two ways: find() takes the tag name and a dictionary of attributes, while select() takes the CSS selector #inventor. The select() method can also be used as select('.<class-name>') to select HTML elements by CSS class name. We used it as select('.wow') to select the element with the class wow in our example.
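To see that both routes lead to the same element, here is a short sketch under the same assumptions as before (an illustrative html_doc string and the built-in "html.parser"). The class_ keyword argument of find_all() is an addition not used in the recipe; the trailing underscore is there because class is a reserved word in Python.

```python
import bs4

# Illustrative snippet containing the two elements discussed above.
html_doc = """
<p class="wow">Your gateway to social web!</p>
<div id="inventor">Mark Zuckerberg</div>
"""
soup = bs4.BeautifulSoup(html_doc, "html.parser")

# Two ways to reach the element whose id is "inventor".
by_find = soup.find('div', {"id": "inventor"})
by_select = soup.select('#inventor')[0]

# Two ways to reach the elements with the CSS class "wow".
wow_find = soup.find_all('p', class_='wow')
wow_select = soup.select('p.wow')

# Both routes return the very same objects from the parsed tree.
same_div = by_find is by_select
same_wow = wow_find[0] is wow_select[0]
print(same_div, same_wow)
```

Which style to use is largely a matter of taste: select() is compact if you already think in CSS selectors, while find() and find_all() keep the tag name and attributes as separate arguments.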

In the third code example, we searched for all the anchor elements in the HTML page and took the first one with soup.find_all('a')[0]. Since we have only one anchor tag, index 0 selects it; if we had multiple anchor tags, the second would be at index 1, the third at index 2, and so on. Methods like getText() and attributes like text (as seen in the preceding examples) extract the actual text content from the elements, while square brackets, as in ['href'], read the value of an attribute.
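When a page has more than one anchor, looping over the result of find_all() is usually more convenient than indexing. The sketch below assumes a hypothetical page with three anchors (one of them without an href, and example.com as a placeholder URL) and uses get('href'), which returns None for a missing attribute instead of raising a KeyError as ['href'] would.

```python
import bs4

# Hypothetical page with several anchors; the second one has no href.
html_doc = """
<p>
  <a href="https://facebook.com">Sign up</a>
  <a>Broken link</a>
  <a href="https://example.com/help">  Help  </a>
</p>
"""
soup = bs4.BeautifulSoup(html_doc, "html.parser")

links = []
for anchor in soup.find_all('a'):
    href = anchor.get('href')            # None when the attribute is missing
    label = anchor.get_text(strip=True)  # text with surrounding whitespace removed
    links.append((label, href))

# Indexing still works: index 1 is the second anchor on the page.
second = soup.find_all('a')[1]

for label, href in links:
    print(label, "->", href)
```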

Cool, so we understood how to parse a web page (or an HTML page) with Python. You also learnt how to select or find HTML elements by ID, CSS, or tags. We also looked at examples of how to extract the required content from HTML. What if we want to download the contents of a page or file from the Web? Let's see if we can achieve that in our next recipe.