Many of the web applications we interact with are synchronous by default. A client connection is established for every request the client makes, and a callable method is invoked on the server side. The server performs the business operation and writes the response body to the client socket. Once the response has been written, the client connection is closed. All these operations happen in sequence, one after the other; hence, synchronous.
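To make this sequence concrete, here is a minimal sketch of a synchronous server written directly against Python's standard socket module. It illustrates the steps just described rather than how any particular framework is built, and the names business_operation, serve_sync, and fetch are hypothetical. Note that the server cannot call accept() for the next client until the current connection has been answered and closed:

```python
import socket
import threading


def business_operation(request_line: str) -> str:
    # Hypothetical stand-in for the server-side callable.
    return f"You asked for: {request_line}\n"


def serve_sync(server: socket.socket, max_requests: int) -> None:
    """Handle connections strictly one after another."""
    for _ in range(max_requests):
        conn, _addr = server.accept()             # 1. connection established
        with conn:                                # 5. closed on leaving the block
            request = conn.recv(4096).decode()    # 2. read the request
            request_line = request.split("\r\n", 1)[0]
            body = business_operation(request_line)  # 3. business operation
            conn.sendall(("HTTP/1.1 200 OK\r\n"      # 4. write the response
                          f"Content-Length: {len(body.encode())}\r\n"
                          "Connection: close\r\n\r\n" + body).encode())
        # Only now does the loop get back to accept() for the next client.


def fetch(port: int) -> str:
    """Minimal client: send one GET request and read until the server closes."""
    with socket.create_connection(("127.0.0.1", port)) as client:
        client.sendall(b"GET /hello HTTP/1.1\r\nHost: localhost\r\n\r\n")
        chunks = []
        while chunk := client.recv(4096):
            chunks.append(chunk)
    return b"".join(chunks).decode()


if __name__ == "__main__":
    server = socket.create_server(("127.0.0.1", 0))  # port 0: any free port
    port = server.getsockname()[1]
    worker = threading.Thread(target=serve_sync, args=(server, 1))
    worker.start()
    print(fetch(port))
    worker.join()
    server.close()
```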

But the Web today cannot rely on synchronous modes of operation alone. Consider a website that queries data from the Web and retrieves information for you. (For instance, your website integrates with Facebook, and every time a user visits a certain page, you pull data from their Facebook account.) If we develop this web application synchronously, then for every client request the server makes an I/O call, either to the database or over the network, to retrieve the information and then presents it back to the client. If these I/O requests take a long time to respond, the server thread handling the request is blocked waiting for the response. Web servers typically maintain a thread pool to handle multiple client requests. If each request holds its thread while it waits on slow I/O, the thread pool is soon exhausted and the server stalls: new requests queue up with no free thread to serve them.
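A minimal sketch of why blocking I/O exhausts a thread pool, assuming a pool of four worker threads and a remote call that takes 0.2 seconds (both values, and the names blocking_handler and serve_burst, are hypothetical). Python's concurrent.futures.ThreadPoolExecutor plays the role of the server's thread pool, and time.sleep stands in for the blocking I/O call:

```python
import time
from concurrent.futures import ThreadPoolExecutor

POOL_SIZE = 4      # hypothetical number of worker threads in the server
IO_LATENCY = 0.2   # hypothetical seconds each remote I/O call takes


def blocking_handler(request_id: int) -> int:
    # A synchronous handler: the worker thread is occupied, doing nothing,
    # for the whole duration of the remote call.
    time.sleep(IO_LATENCY)
    return request_id


def serve_burst(num_requests: int) -> float:
    """Serve a burst of requests with a fixed-size pool; return elapsed seconds."""
    start = time.perf_counter()
    with ThreadPoolExecutor(max_workers=POOL_SIZE) as pool:
        list(pool.map(blocking_handler, range(num_requests)))
    return time.perf_counter() - start


if __name__ == "__main__":
    for n in (4, 8, 16):
        print(f"{n:2d} requests: {serve_burst(n):.2f}s")
```

Because each worker sits idle but occupied for the whole wait, elapsed time grows by one latency per pool-sized wave of requests: 16 requests take roughly four times as long as 4, and any further requests simply wait in the queue.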

Solution? Enter the asynchronous way of doing things!

For this recipe, we will use Tornado, an asynchronous web framework written in Python. It was originally developed at FriendFeed (http://blog.friendfeed.com/) and supported both Python 2 and Python 3; current releases (6.0 and later) support Python 3 only. Tornado uses non-blocking network I/O and can scale to tens of thousands of open connections, which addresses the C10K problem. I like this framework and enjoy developing code with it; I hope you will too! Before we get into the How to do it section, let's first install Tornado by executing the following command:

```
pip install -U tornado
```

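We're now ready to develop our own HTTP server that works on an asynchronous philosophy. The following code implements an asynchronous server with the Tornado web framework. It is written for Python 3 and current Tornado releases, and it makes the outbound request with Tornado's non-blocking AsyncHTTPClient, because a blocking client such as httplib2 would stall the single-threaded event loop while waiting for the reply:

```python
import asyncio

import tornado.web
from tornado.httpclient import AsyncHTTPClient


class AsyncHandler(tornado.web.RequestHandler):
    def initialize(self, shutdown_event):
        # Receives the keyword arguments given in the URL spec below.
        self.shutdown_event = shutdown_event

    async def get(self):
        # Non-blocking outbound request: while we await the response,
        # control returns to the event loop, which can serve other clients.
        http = AsyncHTTPClient()
        response = await http.fetch("http://ip.jsontest.com/")
        self._async_callback(response)

    def _async_callback(self, response):
        # Runs once the response from ip.jsontest.com has arrived.
        print("Content:", response.body.decode())
        print("Response:\nStatusCode: %s Location: %s"
              % (response.code, response.effective_url))
        self.finish()
        # Let main() return, which stops the event loop after this request.
        self.shutdown_event.set()


async def main():
    shutdown_event = asyncio.Event()
    application = tornado.web.Application([
        (r"/", AsyncHandler, dict(shutdown_event=shutdown_event)),
    ], debug=True)
    application.listen(8888)
    await shutdown_event.wait()


if __name__ == "__main__":
    asyncio.run(main())
```

Save the code as tornado_async.py and run the server as:

```
python tornado_async.py
```

The server is now running on port 8888 and ready to receive requests.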

Now, launch any browser of your choice and browse to

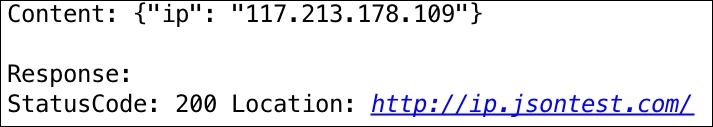

http://localhost:8888/. On the server, you'll see the following output:

Our asynchronous web server is now up and running and accepting requests on port 8888. But what is asynchronous about this? Tornado works on the philosophy of a single-threaded event loop. This event loop continuously polls for events and passes them on to the corresponding event handlers.
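Here is a toy version of such an event loop built on Python's standard selectors module. It is a sketch, not Tornado's implementation: socket pairs stand in for client connections, and make_handler, run_event_loop, and demo are hypothetical names. A single thread asks the selector which sockets are ready and hands each one to the handler registered with it:

```python
import selectors
import socket


def make_handler(sel: selectors.BaseSelector, log: list):
    """Build an event handler that records one message per ready socket."""
    def on_readable(conn: socket.socket) -> None:
        # Called only after the selector reports the socket as readable,
        # so this recv() returns immediately instead of blocking.
        log.append(conn.recv(1024).decode())
        sel.unregister(conn)
        conn.close()
    return on_readable


def run_event_loop(sel: selectors.BaseSelector) -> None:
    # One thread: ask the selector which sockets are ready,
    # then dispatch each ready socket to the handler stored with it.
    while sel.get_map():
        for key, _events in sel.select(timeout=1.0):
            handler = key.data
            handler(key.fileobj)


def demo() -> list:
    # DefaultSelector uses the best mechanism the OS offers
    # (epoll on Linux, kqueue on BSD/macOS, else poll/select).
    with selectors.DefaultSelector() as sel:
        log, clients = [], []
        for payload in (b"request A", b"request B"):
            # socketpair() stands in for a real client connection here.
            client, server_side = socket.socketpair()
            server_side.setblocking(False)
            sel.register(server_side, selectors.EVENT_READ,
                         data=make_handler(sel, log))
            client.sendall(payload)
            clients.append(client)
        run_event_loop(sel)
        for client in clients:
            client.close()
    return sorted(log)


if __name__ == "__main__":
    print(demo())
```

Because selectors.DefaultSelector picks the most efficient readiness mechanism available, the same loop runs unchanged on top of epoll or kqueue.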

In the preceding example, running the app starts the IOLoop. The IOLoop is a single-threaded event loop and is responsible for receiving requests from clients. We have defined the get() method as asynchronous: older Tornado releases did this with the @tornado.web.asynchronous decorator, which was removed in Tornado 6.0, whereas current releases declare the handler method as a native coroutine with async def. When a user makes an HTTP GET request to http://localhost:8888/, the get() method is invoked, and it makes an I/O call to http://ip.jsontest.com.

A typical synchronous web server would wait for the response to this I/O call, blocking the request thread. Tornado, being an asynchronous framework, instead triggers a task, adds it to a queue, makes the I/O call, and returns the thread of execution to the event loop without waiting, so the loop is free to handle other events in the meantime.
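The effect of handing the thread back to the event loop can be illustrated with Python's standard asyncio module, on top of which Tornado's IOLoop runs in Tornado 5.0 and later. This is a sketch under stated assumptions: asyncio.sleep stands in for a real non-blocking network call, and IO_LATENCY, fake_io_call, handle_many, and timed are hypothetical names:

```python
import asyncio
import threading
import time

IO_LATENCY = 0.2  # hypothetical latency of the remote call, in seconds


async def fake_io_call(name: str) -> tuple:
    # 'await' suspends this coroutine and returns control to the event loop
    # until the simulated I/O completes.
    await asyncio.sleep(IO_LATENCY)
    return name, threading.current_thread().name


async def handle_many(n: int) -> list:
    # Start n simulated requests; their waits overlap on the single loop.
    return await asyncio.gather(*(fake_io_call(f"req-{i}") for i in range(n)))


def timed(n: int) -> tuple:
    start = time.perf_counter()
    results = asyncio.run(handle_many(n))
    return time.perf_counter() - start, results


if __name__ == "__main__":
    elapsed, results = timed(100)
    threads = {thread for _, thread in results}
    print(f"{len(results)} requests in {elapsed:.2f}s on threads {threads}")
```

All the simulated calls finish in roughly one latency in total rather than one latency each, and every result reports the same thread: the concurrency comes from the event loop interleaving the waits, not from extra threads.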

The event loop keeps monitoring the task queue and polls for a response from the I/O call. When the response is available, _async_callback() is executed to print the content and the response details, finish the request, and stop the event loop.
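The completion step can be sketched with a bare asyncio event loop: a future represents the pending I/O result, a done-callback plays the part of _async_callback(), and that callback stops the loop. The 0.1-second delay, the payload string, and the run_demo name are hypothetical; loop.call_later merely simulates the I/O completing:

```python
import asyncio


def run_demo() -> list:
    loop = asyncio.new_event_loop()
    events = []

    def on_response(fut: asyncio.Future) -> None:
        # Runs only once the result exists, then stops the loop,
        # mirroring what the recipe's callback does.
        events.append(f"callback got {fut.result()!r}")
        loop.stop()

    fut = loop.create_future()          # the pending I/O result
    fut.add_done_callback(on_response)  # handler to run on completion
    # Simulate the I/O finishing 0.1 s from now with a hypothetical payload.
    loop.call_later(0.1, fut.set_result, "fake response body")
    events.append("handler returned; loop is free")
    loop.run_forever()                  # returns once loop.stop() is called
    loop.close()
    events.append("loop stopped")
    return events


if __name__ == "__main__":
    for line in run_demo():
        print(line)
```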

Event-driven web servers such as Tornado rely on kernel-level event notification mechanisms, such as epoll on Linux and kqueue on BSD and macOS, to monitor for events. If you're interested, these are worth reading more about. Here are a few resources: