Chapter 1, Introduction to Clustering, covered both the high-level concepts and the in-depth details of one of the most basic clustering algorithms: k-means. Although it is a simple approach, do not dismiss it; it will be a valuable addition to your toolkit as you continue to explore unsupervised learning. In many real-world use cases, companies make valuable discoveries with the simplest methods, such as k-means or, for supervised learning, linear regression. Consider a large collection of customer data: if you inspected it directly in a table, you would be unlikely to find anything helpful. However, even a simple clustering algorithm can identify which groups within the data are similar and which are dissimilar. As a refresher, let's quickly walk through what clusters are and how k-means works to find them:

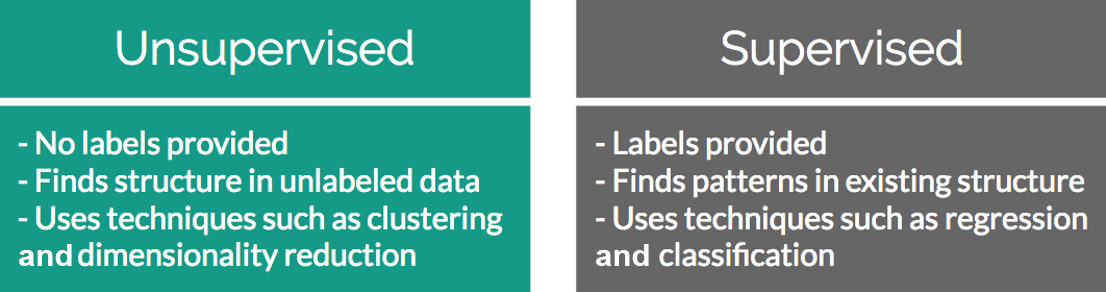

Figure 2.1: The attributes that separate supervised and unsupervised problems

If you were given a random collection of data without any guidance, you would probably start your exploration with basic statistics – for example, the mean, median, and mode of each feature. Whether you then choose a supervised or an unsupervised approach to derive insights depends on the goals you have set for the data. If you determined that one of the features was actually a label and you wanted to see how the remaining features in the dataset influence it, this would become a supervised learning problem. However, if, after initial exploration, you realized that the data is simply a collection of features without a target in mind (such as a collection of health metrics, purchase invoices from a web store, and so on), then you could analyze it through unsupervised methods.
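
As a minimal sketch of that first exploratory pass, the snippet below computes the mean, median, and mode of each feature using only Python's standard library. The `records` data, the feature names, and the `summarize` helper are hypothetical and exist only for illustration.

```python
from statistics import mean, median, mode

# Hypothetical toy dataset: each row is one customer, each key one feature.
records = [
    {"age": 25, "orders": 2, "basket_size": 3},
    {"age": 31, "orders": 5, "basket_size": 3},
    {"age": 31, "orders": 1, "basket_size": 7},
    {"age": 47, "orders": 2, "basket_size": 4},
    {"age": 52, "orders": 2, "basket_size": 3},
]

def summarize(rows):
    """Return the mean, median, and mode of every feature in rows."""
    summary = {}
    for feature in rows[0]:
        # Collect this feature's value from every row (one column).
        values = [row[feature] for row in rows]
        summary[feature] = {
            "mean": mean(values),
            "median": median(values),
            "mode": mode(values),
        }
    return summary

summary = summarize(records)
for feature, stats in summary.items():
    print(feature, stats)
```

None of the columns here is treated as a label, so a summary like this only describes the features; it does not yet say which customers resemble one another.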

A classic example of unsupervised learning is finding clusters of similar customers in a collection of invoices from a web store. Your hypothesis is that by finding out which people are the most similar, you can create more granular marketing campaigns that appeal to each cluster's interests. One way to achieve these clusters of similar users is through k-means.

The k-means Refresher

k-means clustering works by finding "k" clusters in your data using a distance calculation, most commonly Euclidean distance; related variants use other metrics such as Manhattan, Hamming, or Minkowski. "k" points (also called centroids) are randomly initialized in your data, and the distance is calculated from each data point to each centroid. Each data point is assigned to the cluster whose centroid is nearest. Once every point has been assigned to a cluster, the mean of the data points in each cluster is calculated and becomes that cluster's new centroid. This process is repeated until the centroids no longer change position or until the maximum number of iterations is reached.
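
The loop described above can be sketched in plain Python. This is an illustrative version under stated assumptions, not a reference implementation: it uses Euclidean distance, picks the initial centroids from the data points themselves, and leaves an empty cluster's centroid where it was. The names `euclidean`, `kmeans`, `max_iter`, and `seed`, as well as the sample `data`, are hypothetical.

```python
import math
import random

def euclidean(a, b):
    """Euclidean distance between two equal-length points."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def kmeans(points, k, max_iter=100, seed=0):
    """Plain k-means on a list of tuples; returns (centroids, labels)."""
    rng = random.Random(seed)
    # Assumption: initialise centroids by sampling k data points at random.
    centroids = rng.sample(points, k)
    labels = [0] * len(points)
    for _ in range(max_iter):
        # Assignment step: each point joins its nearest centroid.
        labels = [
            min(range(k), key=lambda j: euclidean(p, centroids[j]))
            for p in points
        ]
        # Update step: each centroid moves to the mean of its members.
        new_centroids = []
        for j in range(k):
            members = [p for p, lab in zip(points, labels) if lab == j]
            if members:
                new_centroids.append(
                    tuple(sum(dim) / len(members) for dim in zip(*members))
                )
            else:
                # Assumption: an empty cluster keeps its previous centroid.
                new_centroids.append(centroids[j])
        # Stop once no centroid changes position.
        if new_centroids == centroids:
            break
        centroids = new_centroids
    return centroids, labels

# Hypothetical 2-D data with two visibly separated groups.
data = [(1.0, 1.0), (1.5, 2.0), (1.0, 1.5),
        (8.0, 8.0), (9.0, 8.5), (8.5, 9.0)]
centroids, labels = kmeans(data, k=2)
print(centroids)
print(labels)
```

Which group receives label 0 and which receives label 1 depends on the random initialization, so only the grouping itself is meaningful. On less cleanly separated data, different seeds can also lead to different final clusters.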

