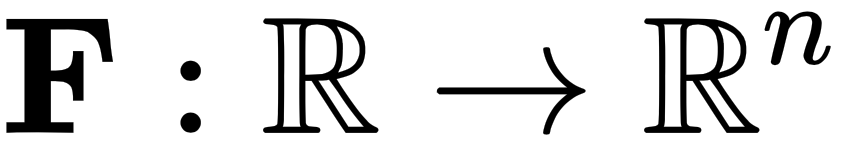

When we find derivatives of functions with respect to vectors, we need to be much more careful. As we will see in Chapter 2, Linear Algebra, vectors and matrices behave quite differently from scalars: matrix multiplication, for example, is noncommutative (in general, AB ≠ BA). So we need a different way to differentiate them.
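To make this concrete, here is a minimal pure-Python sketch (not taken from the book; the helper names `matmul`, `matvec`, `quad_form`, `analytic_grad`, and `numeric_grad` are hypothetical). It checks that AB ≠ BA for one pair of 2×2 matrices, and that the gradient of f(x) = xᵀAx is (A + Aᵀ)x, a standard identity. Carrying over the scalar rule "the derivative of ax² is 2ax" would instead give 2Ax, which is only correct when A is symmetric. A finite-difference check confirms the vector result.

```python
# Sketch: matrices do not commute, and vector derivatives need care.
# For f(x) = x^T A x the gradient is (A + A^T) x, which equals 2 A x
# only when A is symmetric.

def matmul(A, B):
    """Multiply two matrices stored as lists of rows."""
    return [[sum(A[i][k] * B[k][j] for k in range(len(B)))
             for j in range(len(B[0]))]
            for i in range(len(A))]

def matvec(A, x):
    """Matrix-vector product A x."""
    return [sum(a * xi for a, xi in zip(row, x)) for row in A]

def quad_form(A, x):
    """Scalar-valued function of a vector: f(x) = x^T A x."""
    return sum(xi * yi for xi, yi in zip(x, matvec(A, x)))

def analytic_grad(A, x):
    """Closed-form gradient of x^T A x, namely (A + A^T) x."""
    n = len(x)
    S = [[A[i][j] + A[j][i] for j in range(n)] for i in range(n)]
    return matvec(S, x)

def numeric_grad(f, x, h=1e-6):
    """Central finite-difference approximation of the gradient of f at x."""
    grad = []
    for i in range(len(x)):
        xp = list(x)
        xp[i] += h
        xm = list(x)
        xm[i] -= h
        grad.append((f(xp) - f(xm)) / (2 * h))
    return grad

A = [[1.0, 2.0], [0.0, 3.0]]  # deliberately non-symmetric
B = [[0.0, 1.0], [1.0, 0.0]]
print(matmul(A, B))  # [[2.0, 1.0], [3.0, 0.0]]
print(matmul(B, A))  # [[0.0, 3.0], [1.0, 2.0]]

x = [1.0, -2.0]
print(analytic_grad(A, x))                         # [-2.0, -10.0]
print(numeric_grad(lambda v: quad_form(A, v), x))  # approximately [-2.0, -10.0]
print([2 * g for g in matvec(A, x)])               # [-6.0, -12.0]: the naive "2 A x" is wrong here
```

Because A is not symmetric, the naive scalar-style answer disagrees with both the closed-form and the numerical gradient.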


Hands-On Mathematics for Deep Learning


Overview of this book

Most programmers and data scientists struggle with mathematics, having either overlooked or forgotten core mathematical concepts. This book uses Python libraries to help you understand the math required to build deep learning (DL) models.

You'll begin by learning about the core mathematical and modern computational techniques used to design and implement DL algorithms. This book covers essential topics such as linear algebra, eigenvalues and eigenvectors, singular value decomposition (SVD), and gradient algorithms, to help you understand how to train deep neural networks. Later chapters focus on important neural networks, such as linear neural networks and multilayer perceptrons, with a primary focus on helping you learn how each model works. As you advance, you will delve into the math used for regularization, multilayered DL, forward propagation, optimization, and backpropagation to understand what it takes to build full-fledged DL models. Finally, you'll explore convolutional neural network (CNN), recurrent neural network (RNN), and generative adversarial network (GAN) models and their applications.

By the end of this book, you'll have built a strong foundation in neural networks and DL mathematical concepts, which will help you to confidently research and build custom models in DL.

Table of Contents (19 chapters)

Preface

Section 1: Essential Mathematics for Deep Learning

Linear Algebra

Vector Calculus

Probability and Statistics

Optimization

Graph Theory

Section 2: Essential Neural Networks

Linear Neural Networks

Feedforward Neural Networks

Regularization

Convolutional Neural Networks

Recurrent Neural Networks

Section 3: Advanced Deep Learning Concepts Simplified

Attention Mechanisms

Generative Models

Transfer and Meta Learning

Geometric Deep Learning

Other Books You May Enjoy

—that is, it takes in a scalar value as input and outputs a vector. So, the derivative of F is defined...
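The passage above concerns a function F that maps a scalar t to a vector. The original definition is cut off, so the following assumes the usual textbook one, F'(t) = lim_{h→0} [F(t + h) − F(t)] / h, which amounts to differentiating each component of F separately. This minimal sketch uses a hypothetical example curve, F(t) = (cos t, sin t, t²), and a hypothetical helper `derivative_vector_valued` to compare a componentwise finite-difference estimate against the hand-derived derivative (−sin t, cos t, 2t).

```python
import math

def F(t):
    """Hypothetical example: maps a scalar t to a 3-component vector."""
    return [math.cos(t), math.sin(t), t ** 2]

def F_prime_exact(t):
    """Derivative of F worked out by hand, one component at a time."""
    return [-math.sin(t), math.cos(t), 2 * t]

def derivative_vector_valued(f, t, h=1e-6):
    """Central-difference estimate of f'(t) for a vector-valued f.

    Each output entry is the difference quotient of the matching
    component of f, so the vector derivative is taken componentwise.
    """
    forward, backward = f(t + h), f(t - h)
    return [(fp - fm) / (2 * h) for fp, fm in zip(forward, backward)]

t = 0.5
print(derivative_vector_valued(F, t))  # numerical estimate
print(F_prime_exact(t))                # exact componentwise derivative
```

The result has the same number of components as F(t), as expected for a function from a scalar to a vector.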
