Let's get an instance of CartPole-v1 running.

We import the necessary package and build the environment with a call to gym.make(), as shown in the following code snippet:

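    import gym

    # Build the environment and reset it to an initial state
    env = gym.make('CartPole-v1')
    env.reset()

    done = False
    while not done:
        env.render()
        # Take a random action
        observation, reward, done, info = env.step(env.action_space.sample())

    # Close the rendering window once the episode is over
    env.close()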

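The loop renders the environment and takes a random action at each timestep using env.step(). It runs until the episode ends, that is, until env.step() returns done as True. This happens when the pole tips too far, the cart leaves the track, or the episode reaches the 500-step limit of CartPole-v1.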
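To see how long a random policy actually survives, the same loop can be wrapped in a small function that counts steps and sums the rewards. The following is only a minimal sketch: the name run_random_episode and the max_steps parameter are hypothetical, not part of Gym, and the code assumes the classic Gym API in which env.step() returns four values, as in the snippet above. Rendering is left out so that the function only collects numbers.

```python
def run_random_episode(env, max_steps=1000):
    """Run one episode with random actions; return (steps, total_reward).

    Hypothetical helper. Assumes the classic Gym API, where env.step()
    returns (observation, reward, done, info).
    """
    env.reset()
    steps = 0
    total_reward = 0.0
    while steps < max_steps:
        # Sample a random action, ignoring the observation entirely
        observation, reward, done, info = env.step(env.action_space.sample())
        steps += 1
        total_reward += reward
        if done:
            # The episode ended before the step cap was reached
            break
    return steps, total_reward
```

With the env created earlier, calling steps, total_reward = run_random_episode(env) and printing both values shows how many timesteps the pole stayed up. The explicit cap is a design choice that keeps the loop bounded even for an environment that never reports done.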

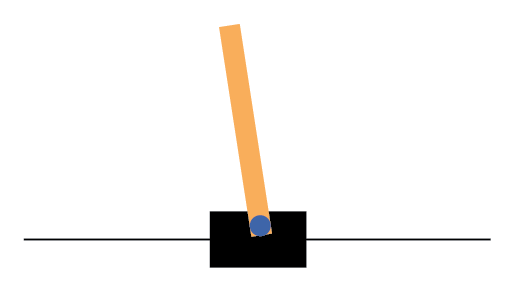

Here's the output displaying the CartPole-v1 environment:

You should see a small window pop up with the CartPole environment displayed.

Because we're taking random actions in our current simulation, we're not taking any of the information about the pole's direction or velocity into account. The pole will quickly...